
Splunk and New Relic are both popular observability and monitoring tools, but they were designed with different core strengths. The easiest way to understand them is to compare them side by side, as in the table below.

| Feature | Splunk | New Relic |
|---|---|---|
| Core focus | Log management, machine-data analytics, SIEM | Application Performance Monitoring (APM), full-stack observability |
| Primary users | Security teams, DevOps, SRE | Developers, DevOps, SRE |
| Data types | Logs, metrics, traces, events (strong in logs) | Metrics, traces, logs (strong in APM) |
| Deployment | Cloud + on-prem options | Mainly cloud SaaS |
| Query language | SPL (Search Processing Language) | NRQL |
| Visualization | Dashboards and log analytics | Strong UI for application monitoring |
| Pricing | Often expensive (data ingestion based) | Generally cheaper and simpler pricing |
| Security use cases | SIEM, threat detection | Not mainly used for security |
| Ease of use | Powerful but steeper learning curve | Easier to start and use |

Sources note that Splunk excels at log analytics and security, while New Relic focuses more on monitoring application performance and user experience.

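Splunk can ingest and analyze almost any log or event data source for troubleshooting and analytics.

New Relic provides full-stack observability across apps, infrastructure, and networks with dashboards and alerts.

Example teams: Splunk is typically used by security, DevOps, and SRE teams, while New Relic is typically used by developers, DevOps, and SRE teams.

Imagine a slow web application. New Relic, with its APM focus, is the natural place to see how the application itself is performing. Splunk, with its log focus, is the natural place to search the related logs and events.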

| If your focus is… | Choose |
|---|---|
| Application performance | New Relic |
| Log analytics & security | Splunk |
| Developer observability | New Relic |
| Enterprise monitoring + SIEM | Splunk |

Splunk is a software platform used to collect, analyze, and visualize machine data and big data.

Machine data is generated by systems such as:

This data usually does not have direct business meaning, but it is very useful for:

Splunk can process unstructured, semi-structured, and structured data, then allow users to search, analyze, and visualize insights using reports and dashboards.

Over time, Splunk has evolved from a simple log analysis tool into a powerful big data analytics platform.

Splunk is a data analytics platform that collects, searches, analyzes, and visualizes machine data to monitor systems and gain insights.

The .deb file is the installation package used by Ubuntu, similar to .exe files in Windows.

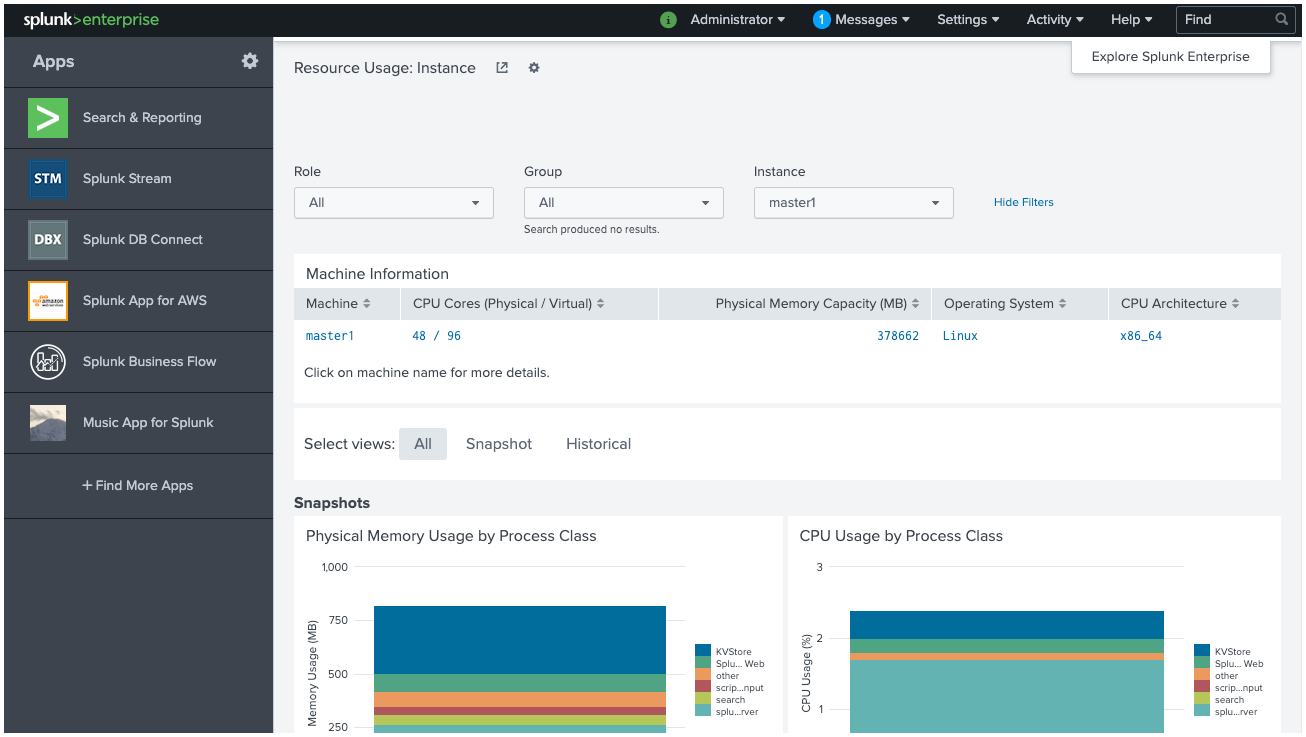

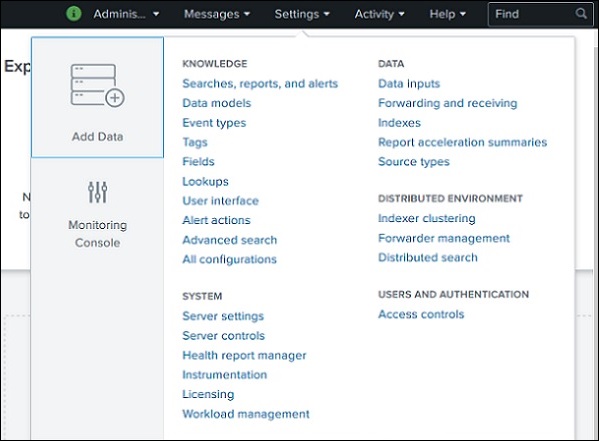

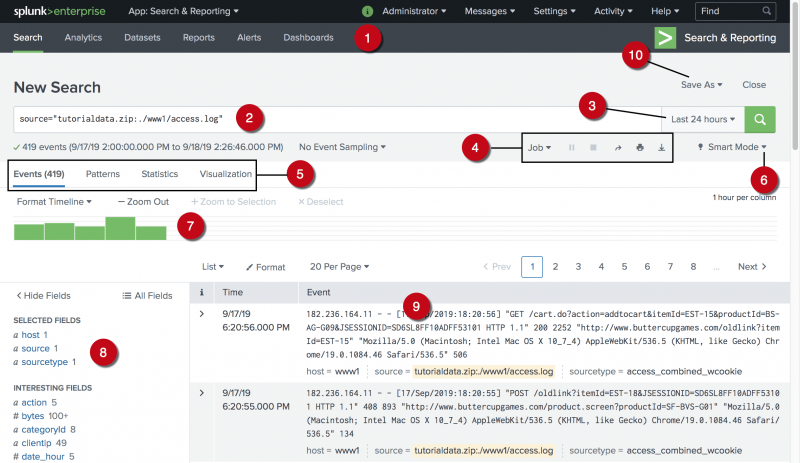

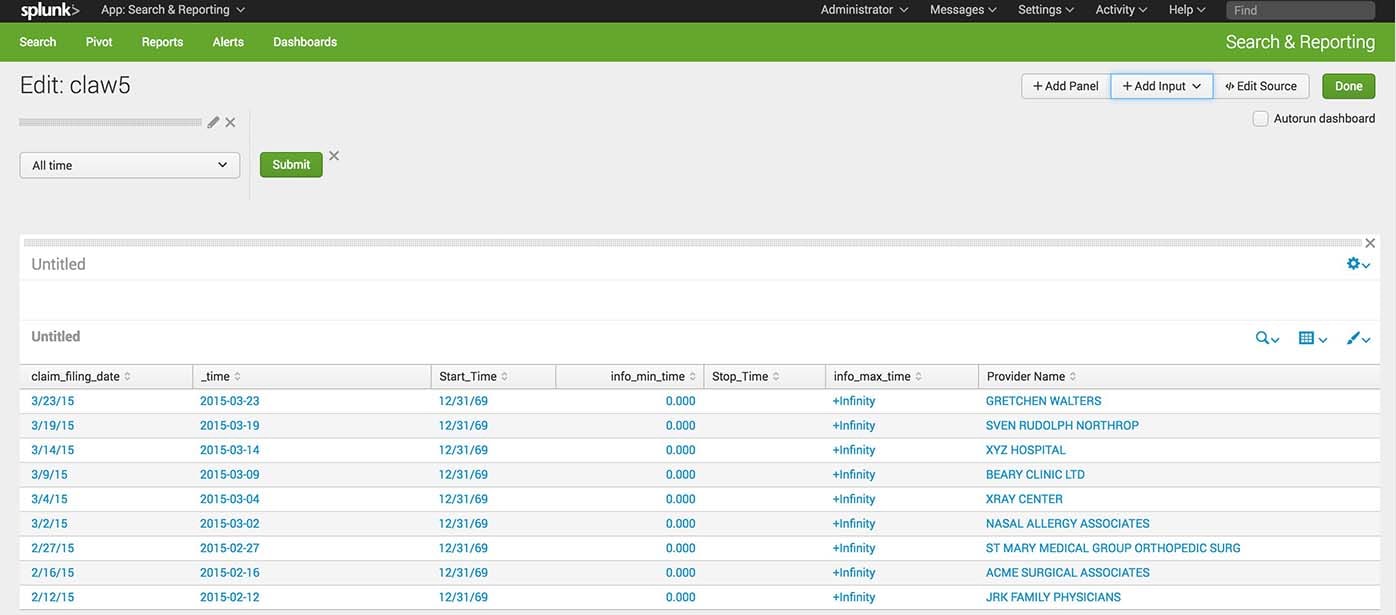

The picture below shows the initial screen after you log in to Splunk with the admin credentials.

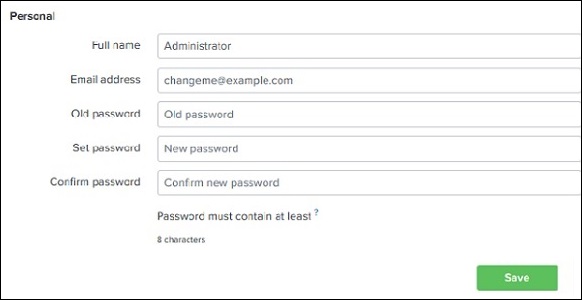

The Administrator dropdown allows management of the admin account.

Using this option you can:

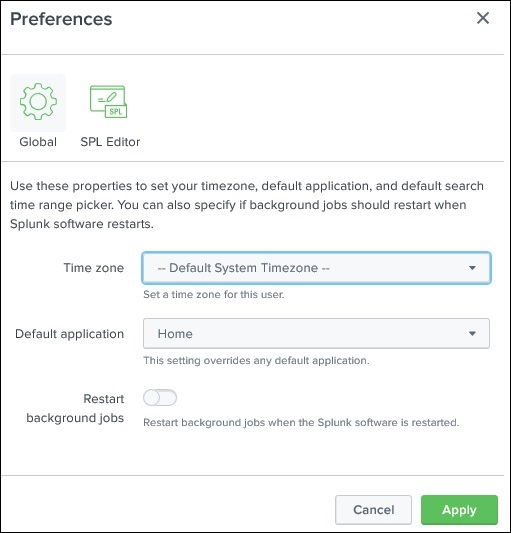

It also provides access to Preferences, where you can:

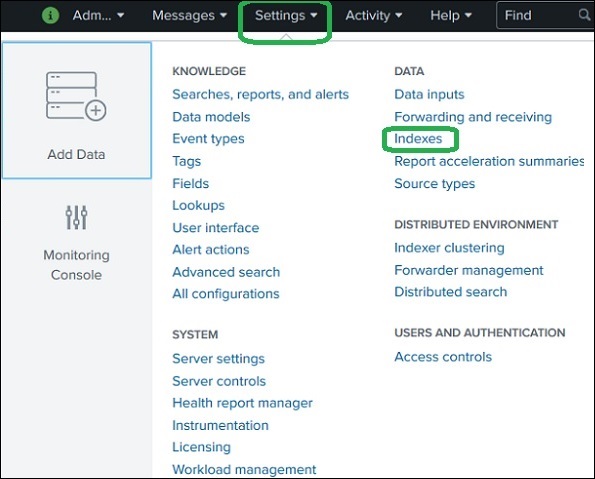

The Settings menu contains most of the core configuration features of Splunk.

From here you can:

This section is mainly used for administration and system configuration.

The Search & Reporting app is the most commonly used area in Splunk.

It allows users to:

Users write Splunk Search Processing Language (SPL) queries here to analyze data.

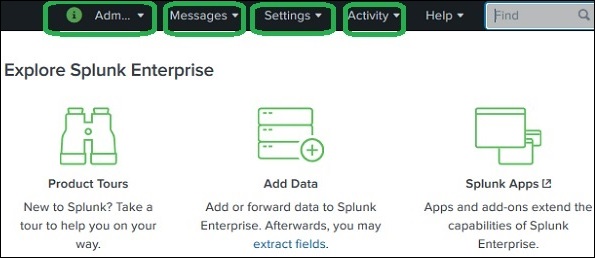

The Splunk Web Interface is a browser-based platform where users can search data, create reports, configure alerts, and manage system settings and users.

It includes key sections such as Administrator, Settings, and Search & Reporting.

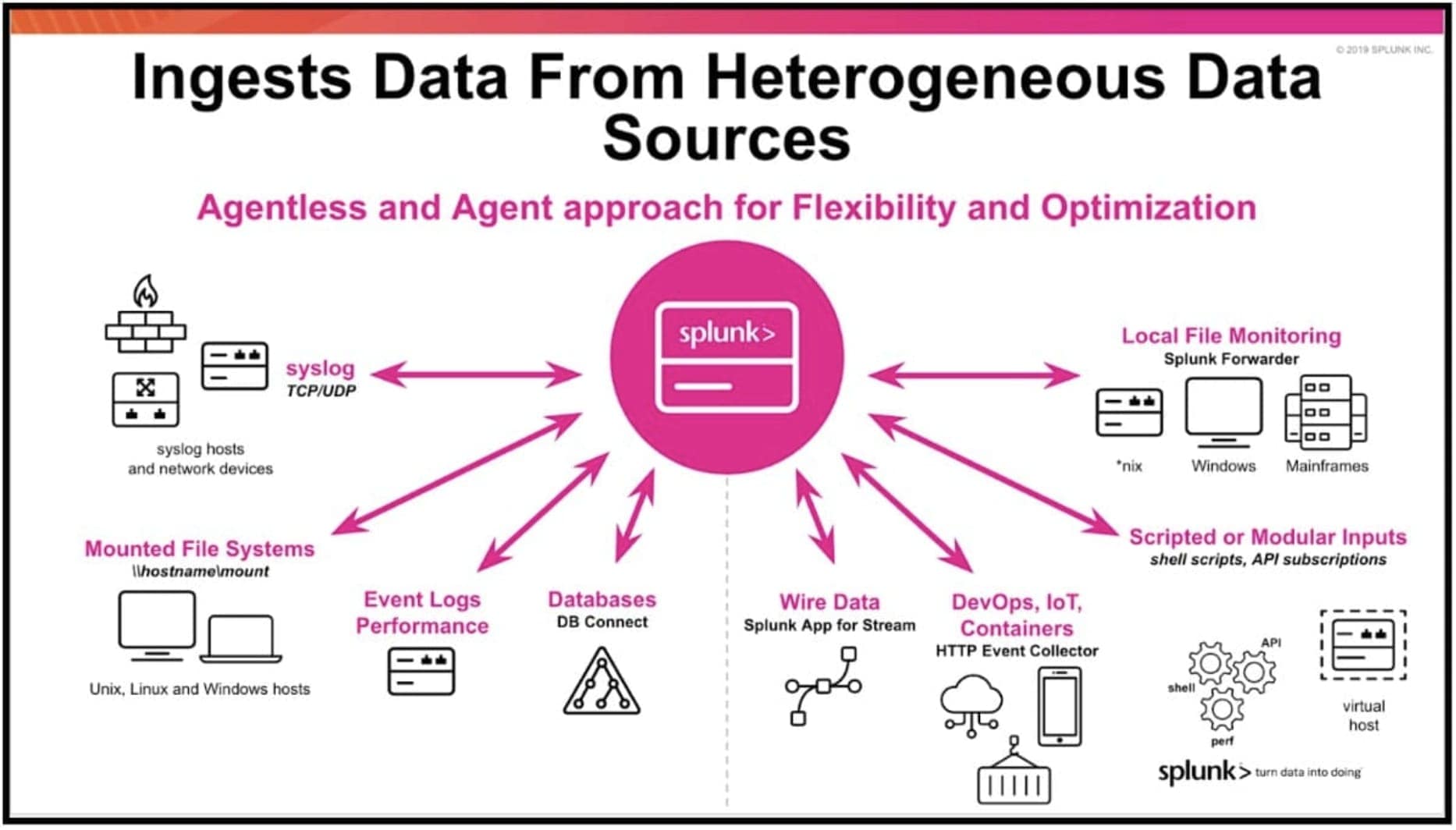

Data Ingestion in Splunk means importing or loading data into Splunk so that it can be searched, analyzed, and visualized.

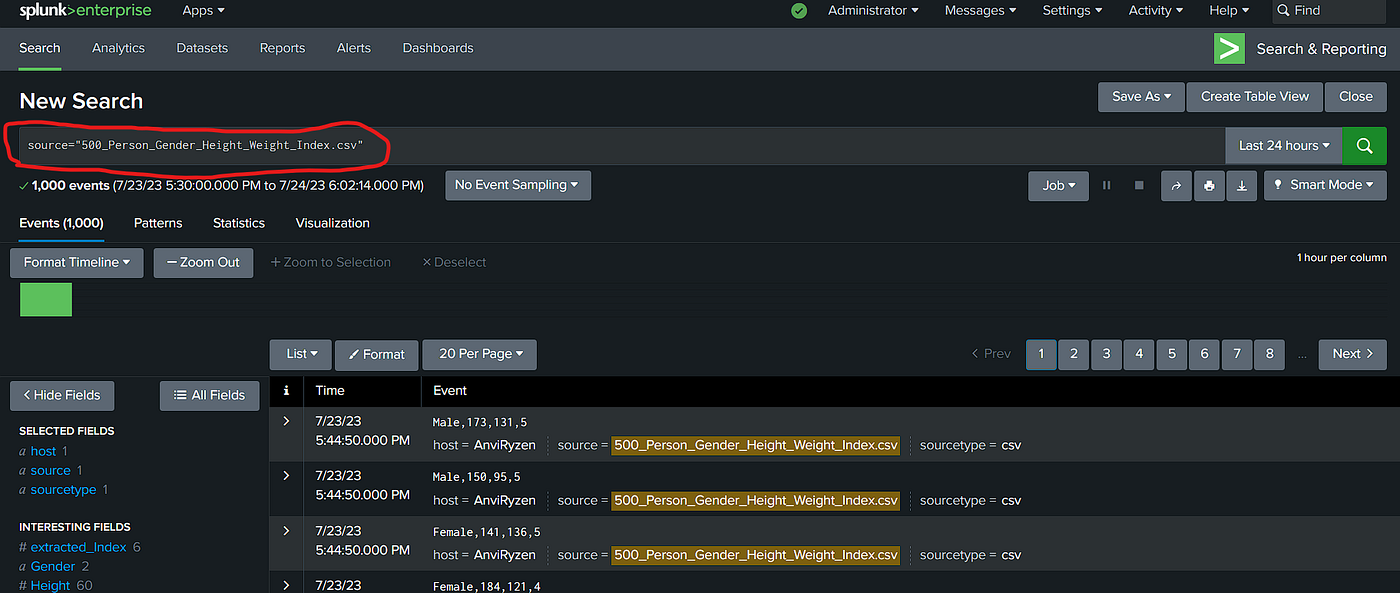

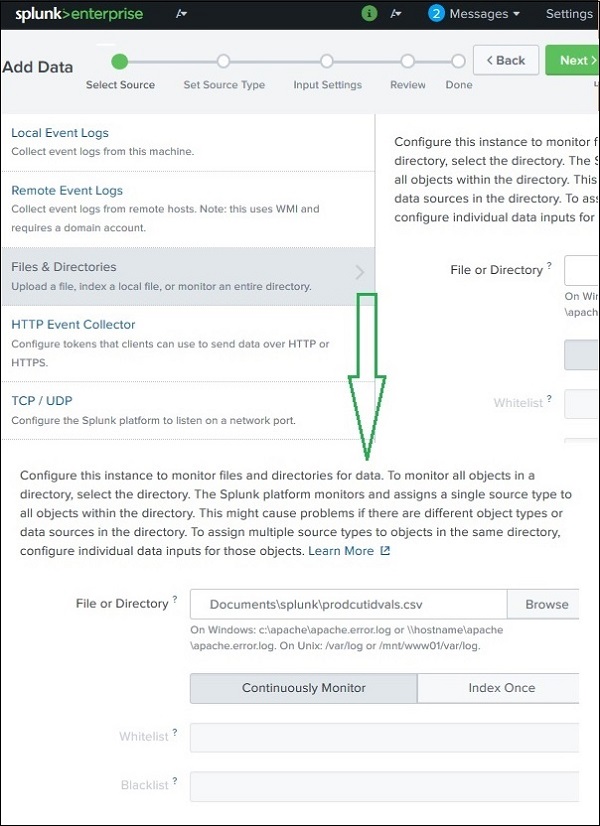

This process is done using the Add Data option available in the Search & Reporting App.

After logging in to Splunk:

This opens the data upload wizard where you select the data source.

You need a dataset to upload into Splunk.

Example:

secure.log

These log files simulate machine data for analysis.

This uploads the file into the ingestion pipeline.

Splunk automatically detects the data format.

Common source types include:

You can:

Here you configure host information for the data.

Options include:

Constant Value

Regex on Path

Segment in Path

/var/log/server1/logfile

You also choose an Index Type:

Splunk shows a summary of all selected settings.

You should:

After finishing:

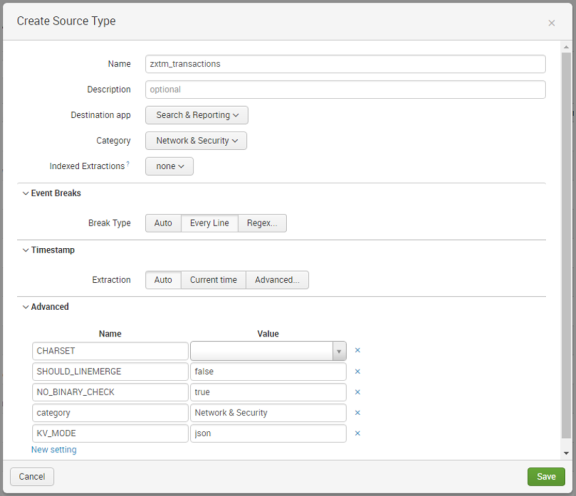

A Source Type in Splunk defines the format and structure of incoming data.

When data is ingested into Splunk, the data processing engine automatically analyzes the data and assigns it a source type. This process is called Source Type Detection.

This helps Splunk:

Example:

If Splunk receives a log file from an Apache web server, it automatically identifies it and applies the Apache log source type.

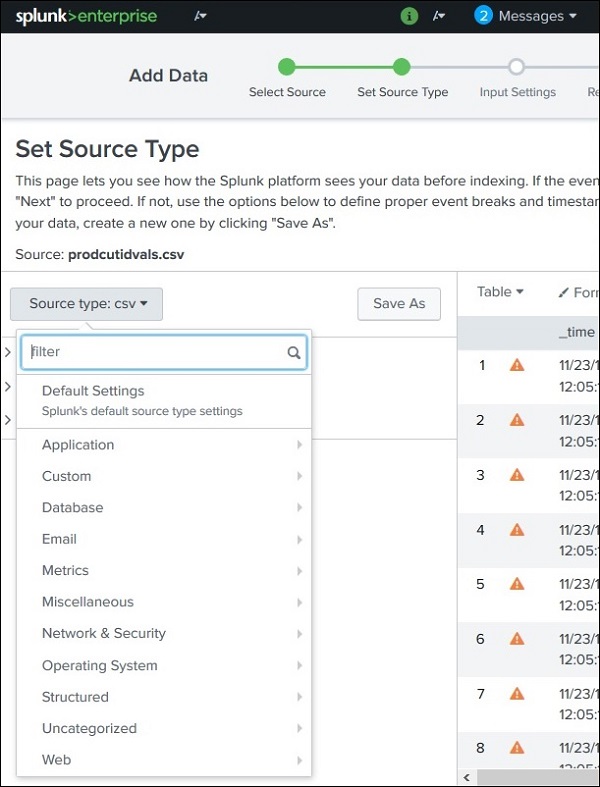

When uploading data using the Add Data feature:

These include formats such as:

Each source type category can have multiple sub-categories.

Example:

Splunk can recognize these formats and automatically extract useful fields.

Splunk includes many pre-trained source types, meaning it already knows how to interpret certain log formats.

| Source Type | Description |
|---|---|
| access_combined | HTTP web server logs in NCSA combined format |
| access_combined_wcookie | Same as combined logs but with cookie information |
| apache_error | Apache web server error logs |
| linux_messages_syslog | Linux system log messages |
| log4j | Logs generated by applications using Log4j |
| mysqld_error | MySQL database error logs |

A Source Type in Splunk identifies the format of incoming data, allowing Splunk to automatically classify logs and extract useful fields, making analysis faster and easier.
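Once data has a source type, the source type can be used as a search filter. A minimal sketch, assuming web server logs were indexed with the access_combined source type listed above and that a status field is extracted from them:

```spl
sourcetype=access_combined status=404
```

This would return only the web access events whose HTTP status is 404.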

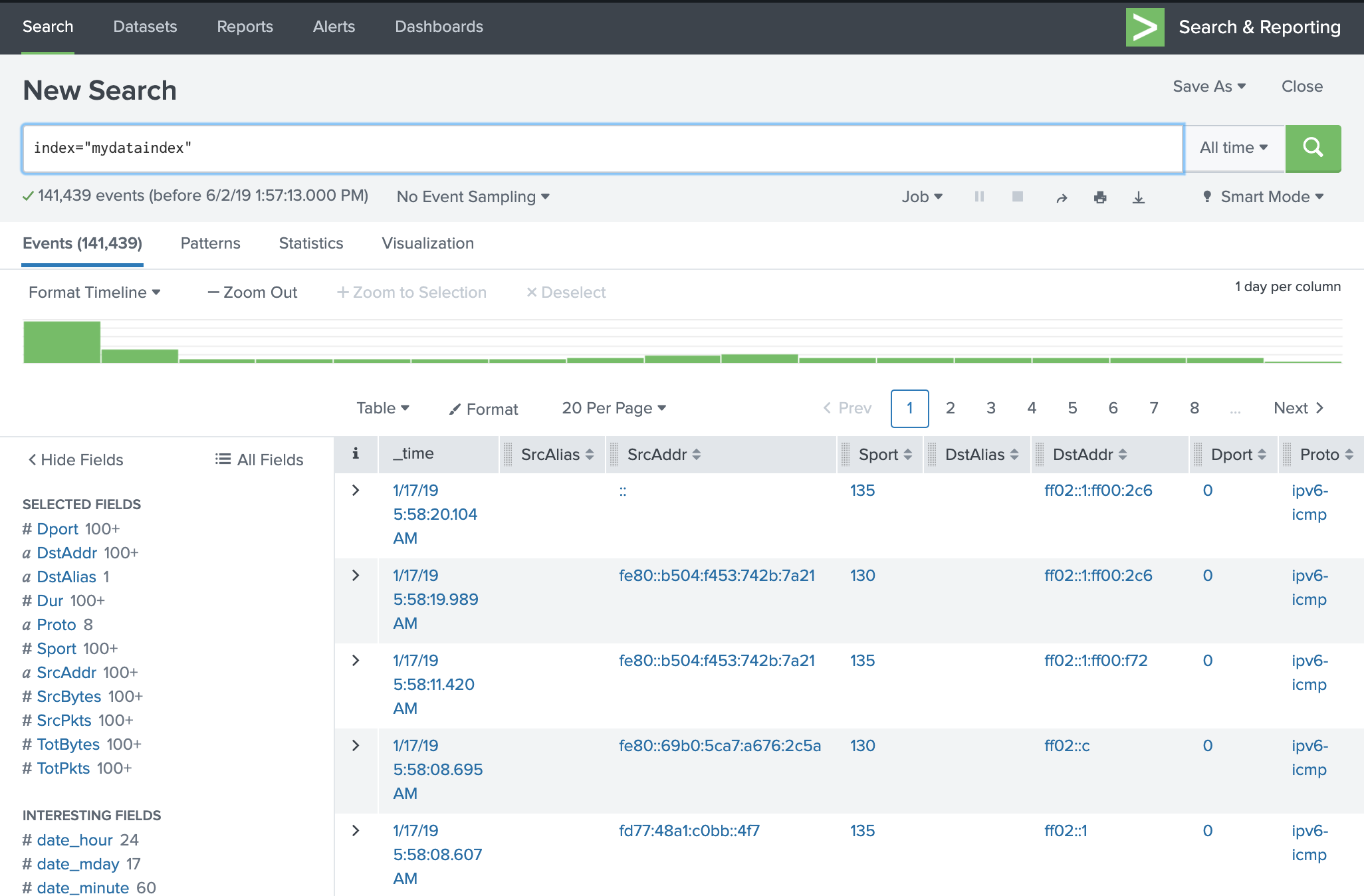

Basic Search in Splunk allows users to find specific information from the ingested data (such as log files, system events, or machine data).

Splunk uses a Search Processing Language (SPL) to perform searches. With SPL, you can filter, combine, and analyze log data quickly.

The search feature is available in the Search & Reporting App in the Splunk web interface.

After logging in to Splunk:

This is the main place where data analysis begins in Splunk.

You can search for a specific term present in the log data.

Example:

This query will show all log events generated from the host named "server1".
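A minimal sketch of such a search, assuming the events were indexed with the host value server1:

```spl
host="server1"
```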

Results appear in:

You can combine multiple terms to make the search more specific.

Example:

Using double quotes (" ") searches for the exact phrase.
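The difference can be sketched with two separate searches, one per line. The terms failed and password are only illustrative:

```spl
failed password
"failed password"
```

The first search matches events that contain both words anywhere in the event. The second matches only events in which the two words appear together as the exact phrase.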

Example result:

Wildcards help search for multiple variations of a word.

Example:

Here:

fail* matches words that begin with fail, such as fail, failed, and failure.

AND ensures the event must also contain password.

Other operators include OR and NOT.

Example:
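As a sketch of these operators (each line is a separate search, and the terms invalid and root are illustrative assumptions):

```spl
fail* AND password
fail* OR invalid
fail* NOT root
```

Boolean operators must be written in uppercase. The first search requires both terms, the second accepts either one, and the third excludes events that contain root.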

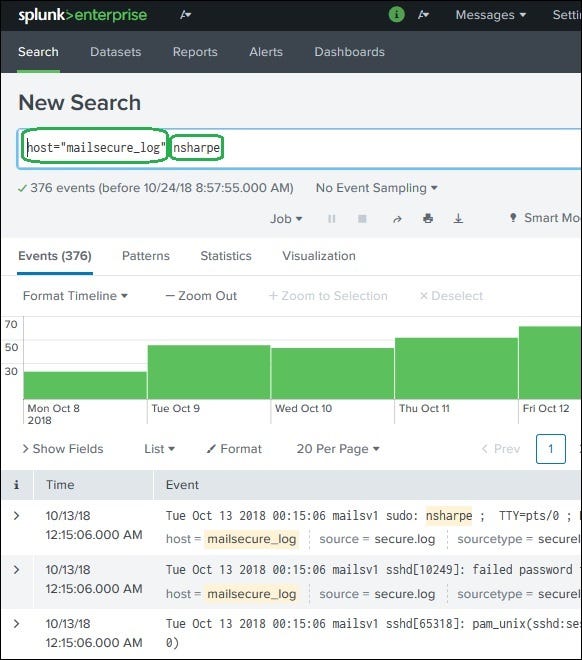

Splunk allows you to narrow down results directly from the event list.

Steps:

Example refined query:

Now only events containing 3351 will be displayed.
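Assuming the starting search was a wildcard search such as fail* AND password, adding the value 3351 from the event list would give a refined query along these lines:

```spl
fail* AND password 3351
```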

Also:

Timeline

Events List

Fields Panel

Basic Search in Splunk allows users to query ingested data using keywords or SPL queries. Users can combine terms, use wildcards, apply operators, and refine results to analyze log data efficiently.

When Splunk ingests machine data (like log files), it automatically analyzes the data and breaks it into fields.

A field represents a single piece of information from an event.

Example fields from a log record:

Even if the data is unstructured, Splunk tries to extract key-value pairs and separate them based on:

This automatic process is called Field Extraction.

After running a search in Splunk:

Each field represents a column of information within the events.

You can control which fields appear in the search results.

Steps:

For every field Splunk also shows:

This helps users understand how important or common a field is in the dataset.

If you click on a field name, Splunk shows a Field Summary.

The summary includes:

Example:

Field: status

| Value | Count | Percentage |
|---|---|---|
| success | 120 | 60% |
| failure | 80 | 40% |

This helps identify patterns and trends quickly.

Fields can also be used directly in the search query to filter results.

Example:

This query returns all events generated on 15th October from the host mailsecure_log.

Another example:

This search shows failed login attempts for the root user.
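The two field searches described above could look like the following sketch. It assumes the default date_month and date_mday fields are available for the events and that a user field has been extracted. Each line is a separate search:

```spl
host="mailsecure_log" date_month=october date_mday=15
"failed password" user=root
```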

Field Searching in Splunk allows users to analyze specific parts of log data by using automatically extracted fields such as host, timestamp, user, and event type, making searches more precise and efficient.

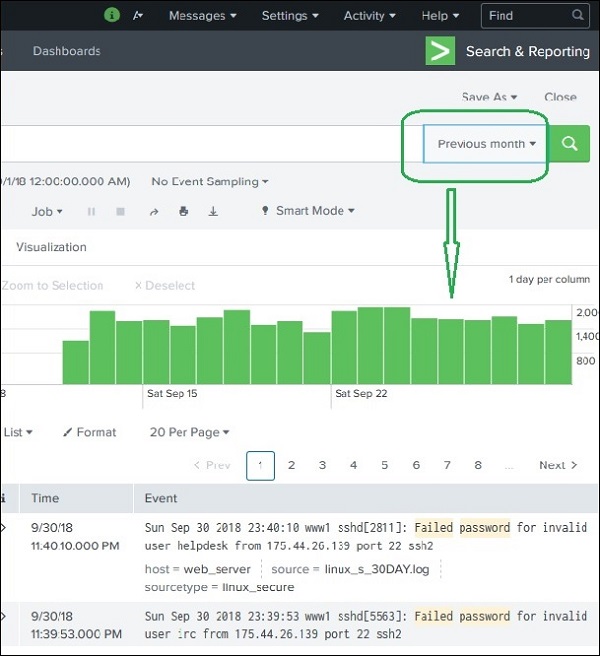

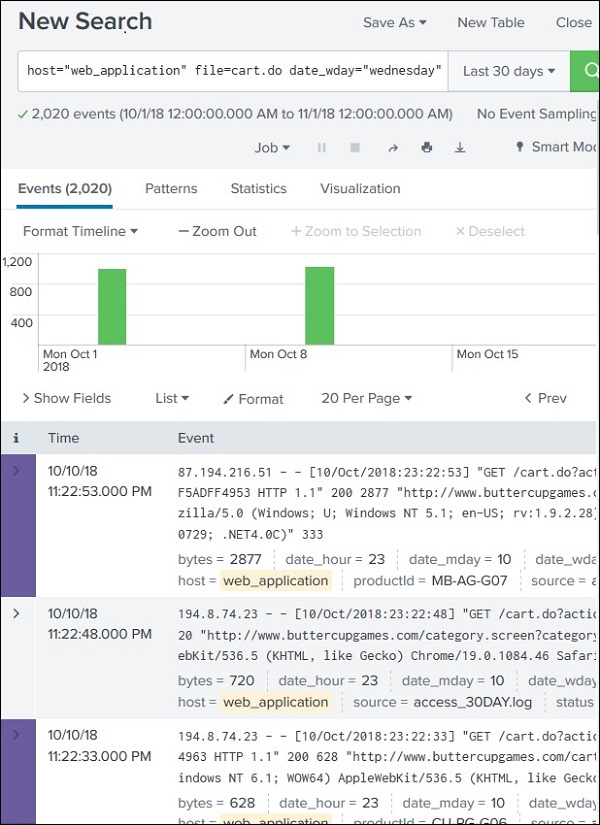

In Splunk, Time Range Search allows users to filter and analyze events based on a specific time period.

Every search result page in Splunk shows a timeline graph at the top.

This graph displays how events are distributed across time.

Using this timeline, users can limit their search results to a specific time range, making analysis faster and more accurate.

Splunk provides predefined time ranges that can be selected easily.

Common preset options include:

Example:

If you select Previous Month, Splunk will display only the events that occurred during the previous month.

The timeline graph updates automatically to show events for that selected period.

You can also manually select a portion of time from the timeline graph.

Steps:

Important:

This method is useful for quick analysis of specific time spikes.

Splunk also allows time filtering directly through search commands.

Two important time modifiers are earliest and latest, which set the start and the end of the search window.

Example:

Meaning:

This method provides more precise control compared to manual timeline selection.
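A minimal sketch of both modifiers, using error as an illustrative search term and an arbitrary example date (each line is a separate search):

```spl
error earliest=-24h latest=now
error earliest="10/15/2024:00:00:00" latest="10/16/2024:00:00:00"
```

The first search covers the last 24 hours using relative time. The second uses absolute timestamps in the %m/%d/%Y:%H:%M:%S format to cover a single day.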

Splunk can also display events occurring near a specific time.

Users can specify the time interval scale, such as:

Example use case:

This helps identify related events around the same time period.

Time Range Search in Splunk allows users to analyze log events within a specific time period using preset time ranges, timeline selection, or time modifiers like earliest and latest.

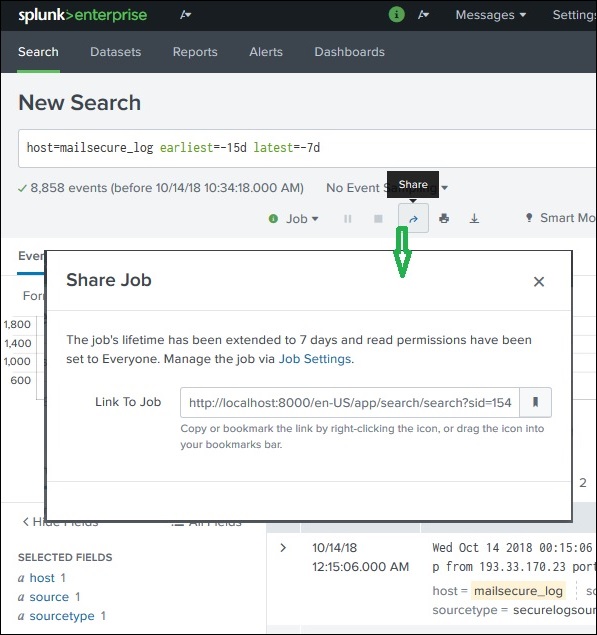

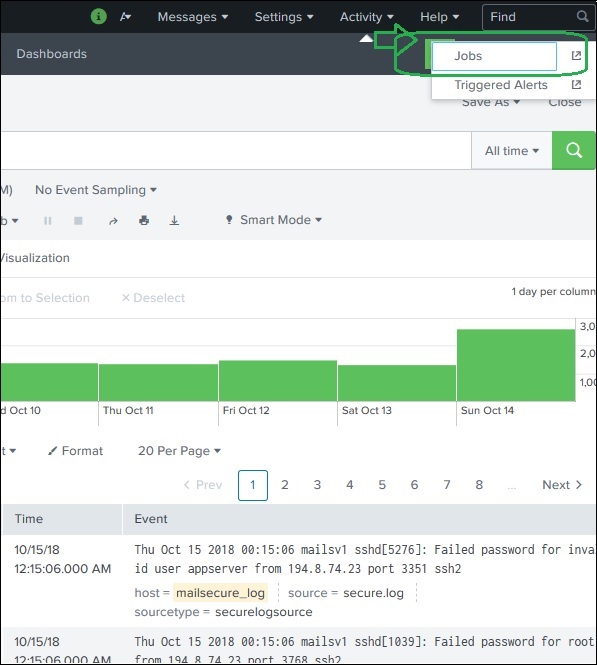

In Splunk, when you run a search query, the result is saved as a search job on the Splunk server.

These jobs can be shared with other users or exported as files so that the results can be used outside Splunk.

This feature helps teams collaborate and reuse search results without running the same query again.

After a search query finishes:

Using this link:

Important:

All executed searches are stored as jobs in Splunk.

To view them:

Each job includes:

Search jobs expire automatically after a certain time to save server resources.

If you want to keep the results longer:

This keeps the job available for future use.

Splunk also allows exporting search results into files.

Steps:

Available formats:

After selecting the format, the file is downloaded to your local system.

This allows results to be shared with people who do not use Splunk.

Splunk allows users to share search results through links or saved jobs, and also export results as CSV, XML, or JSON files for external use and collaboration.

SPL (Search Processing Language) is the language used in Splunk to search, filter, and analyze data.

It allows users to:

SPL queries are written in the search bar of the Search & Reporting app.

A typical SPL query uses a pipeline structure (|), where each command processes the result from the previous command.

Example:

This search finds events containing error and then shows only the first 3 results.
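Written out, the search described above is:

```spl
error | head 3
```

The part before the pipe retrieves the matching events, and head 3 keeps only the first three of them.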

SPL consists of four main components: search terms, commands, functions, and clauses.

Search terms are the keywords or phrases used to retrieve data from Splunk.

Example:

This query returns all events containing the words login and failed.

You can also search specific fields.

Example:

This shows events generated from server1.
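The two searches described above can be sketched as follows (each line is a separate search, and server1 is the host value assumed in the text):

```spl
login failed
host="server1"
```

In the first search the two keywords are combined with an implicit AND, so an event must contain both.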

Commands tell Splunk what action to perform on the search results.

Commands are separated by the pipe symbol |.

Example:

Explanation:

`error` → search term

`| head 3` → command showing only the first 3 results

Common commands include:

Functions perform calculations on fields in the dataset.

These are commonly used with commands like stats.

Example:

Explanation:

avg() calculates the average value of the field bytes.

Other common functions:

sum() – total value

count() – number of events

max() – maximum value

min() – minimum value

Example:

This counts the total number of events.
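A sketch of both functions in use, assuming web access events with a numeric bytes field and an illustrative access_combined source type (each line is a separate search):

```spl
sourcetype=access_combined | stats avg(bytes)
sourcetype=access_combined | stats count
```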

Clauses help organize and rename results.

Common clauses include:

Example:

Meaning:

Example with rename:

Result:
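As an illustration of clauses, the sketch below uses BY to group results and AS to rename the output column. The search term error and the column name total_events are assumptions chosen for the example, and each line is a separate search:

```spl
error | stats count BY host
error | stats count AS total_events BY host
```

The first search returns one row per host with a column named count. The second returns the same rows with the column renamed to total_events.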

SPL (Search Processing Language) is the query language used in Splunk to search and analyze machine data.

It includes search terms, commands, functions, and clauses to filter, calculate, and organize data results.

Search Optimization in Splunk improves the speed and efficiency of search queries automatically.

Splunk has built-in optimization mechanisms that analyze the query and adjust the search process so that results are returned faster and with less resource usage.

The two main optimization goals are:

Early filtering means removing unnecessary data as early as possible during the search process.

Instead of processing all events, Splunk first filters out irrelevant events.

Benefits:

Example search:

Splunk first filters events containing these keywords before applying further processing.

Splunk uses multiple indexers to process searches simultaneously.

Steps:

Benefits:

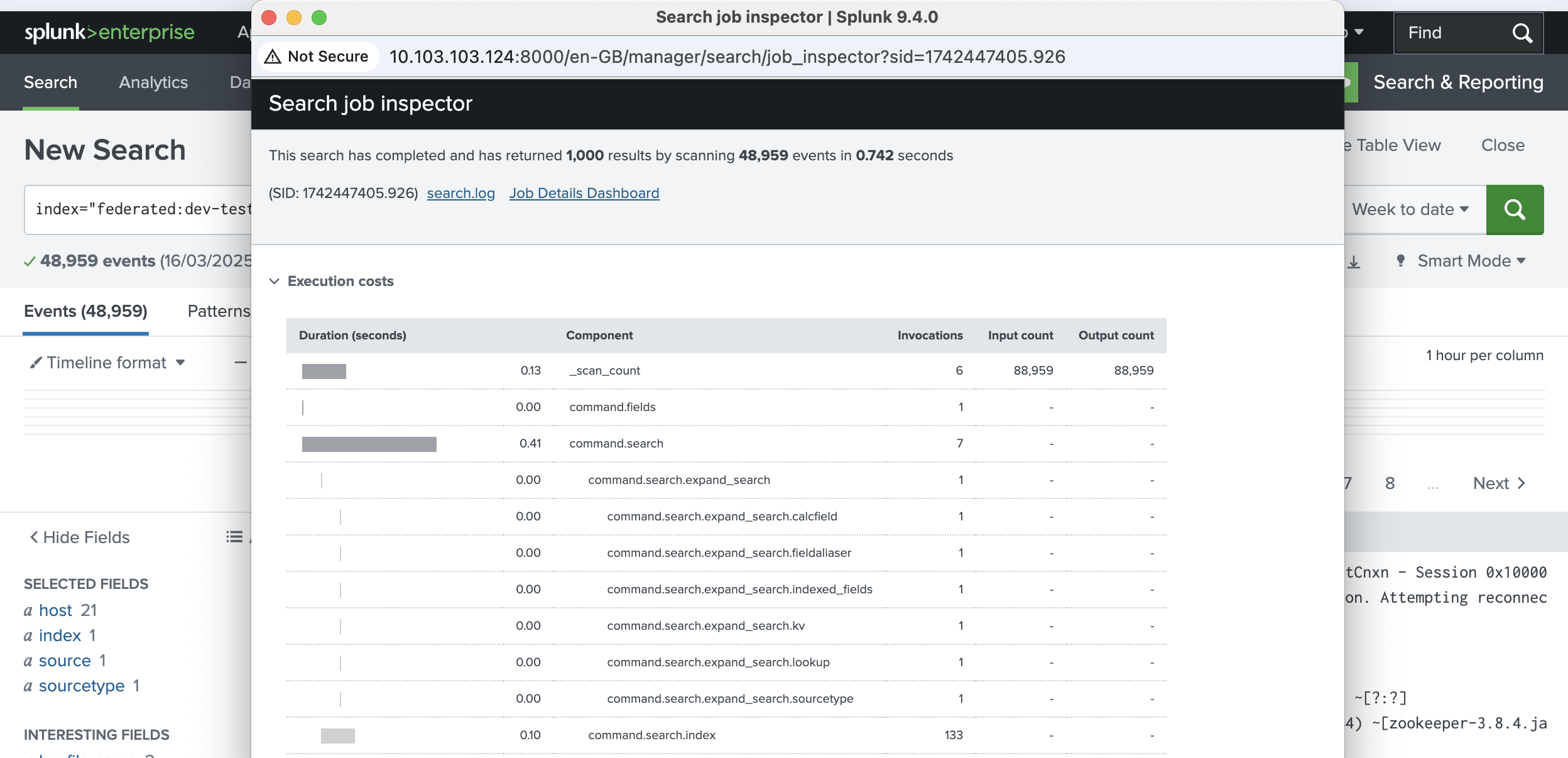

Splunk provides a tool called Job Inspector to analyze how a search was optimized.

Steps to access it:

The Job Inspector shows:

This helps users understand how Splunk executed the search.

Search query:

Using Job Inspector, you can see:

Splunk also allows disabling built-in optimization for testing purposes.

This can be done using the noop command.

Example:

Purpose:

Sometimes disabling optimization may give faster results, depending on the query.
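A minimal sketch, assuming an illustrative search that counts error events per host. Appending noop with search_optimization=false turns off the built-in optimizations for that one search:

```spl
error | stats count BY host | noop search_optimization=false
```

Running the search with and without the final noop command and comparing the two runs in the Job Inspector shows whether optimization helped.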

Transforming commands in Splunk are used to convert search results into structured tables or statistical summaries.

These commands take the raw event data returned from a search and transform it into formats suitable for reports, statistics, and visualizations such as charts and dashboards.

Instead of showing individual log events, transforming commands produce aggregated results.

Some commonly used transforming commands include:

The highlight command is used to highlight specific keywords in search results.

It helps users quickly identify important terms in large datasets.

Example:

Explanation:

This improves readability and analysis of log events.
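A minimal sketch, assuming you are searching for events that contain the term error and want that term highlighted in the event list:

```spl
error | highlight error
```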

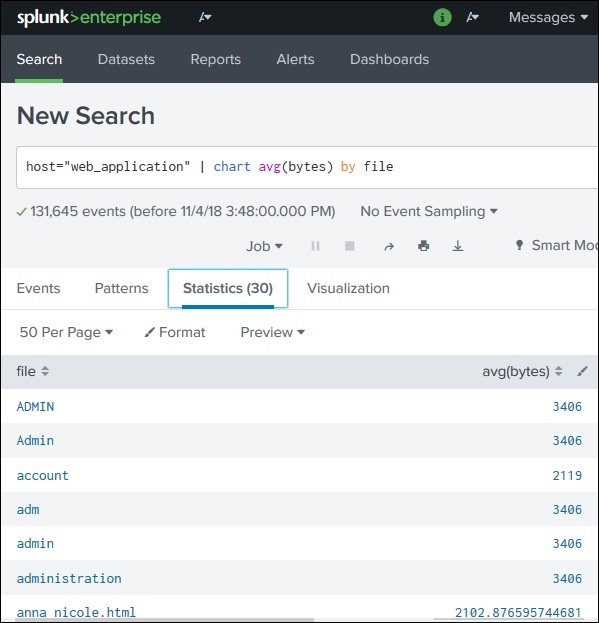

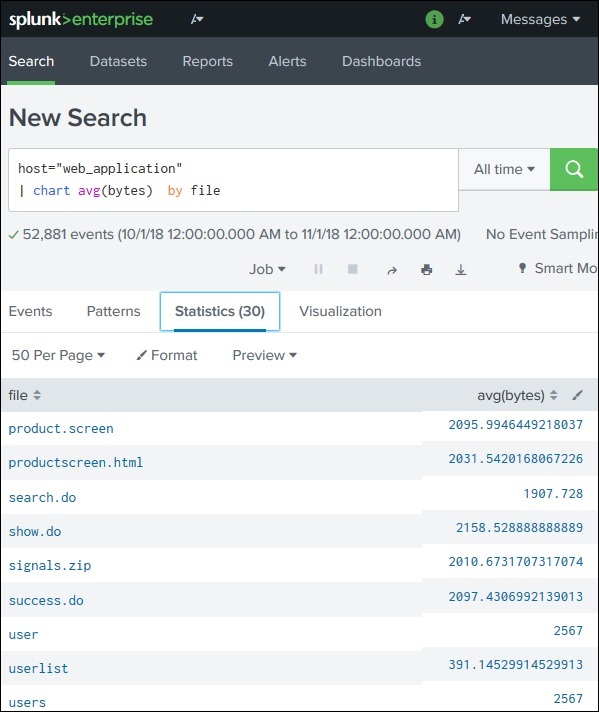

The chart command transforms search results into a table format that can be visualized as charts.

Supported visualizations include:

Example:

Explanation:

This command is commonly used for data visualization in dashboards.
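A minimal sketch, assuming web access events with the bytes and file fields used elsewhere in this tutorial and an illustrative source type:

```spl
sourcetype=access_combined | chart avg(bytes) BY file
```

The result has one row per file with its average size, which can then be shown on the Visualization tab.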

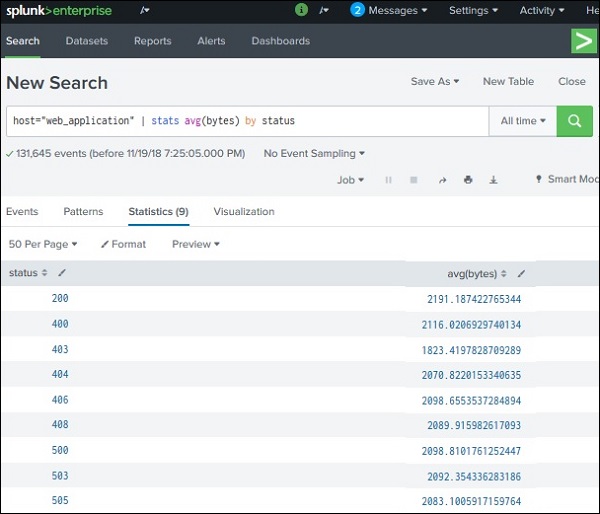

The stats command is one of the most powerful transforming commands in Splunk.

It performs statistical calculations on fields.

Common functions used with stats:

Example:

Explanation:

Output example:

| Weekday | Count |
|---|---|
| Monday | 20 |
| Tuesday | 15 |
| Wednesday | 18 |

This produces summary statistics instead of raw events.
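A table like the one above could come from a search along these lines. This is a sketch that assumes the default date_wday field is available and uses an illustrative source type:

```spl
sourcetype=access_combined
| stats count AS Count BY date_wday
| rename date_wday AS Weekday
```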

Transforming commands in Splunk convert raw search results into structured statistical data that can be used for reports, charts, and dashboards.

Common examples include highlight, chart, and stats.

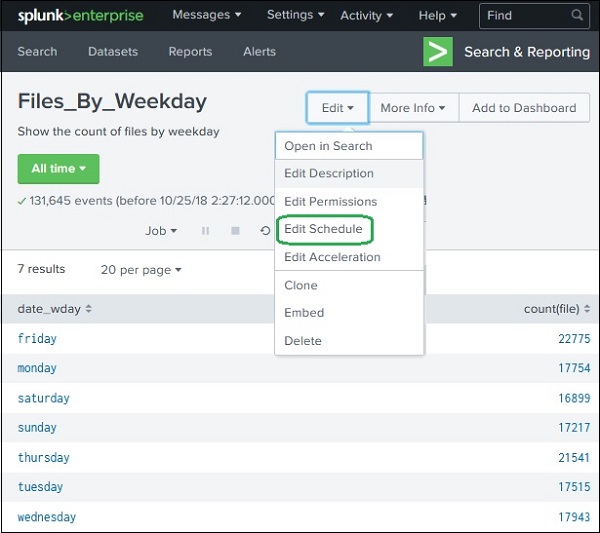

A Splunk Report is a saved result of a search query that displays statistics, tables, or visualizations based on event data.

Key points about reports:

Creating a report in Splunk is simple.

This opens the Create Report window.

You must provide:

Time Picker:

If enabled, users can change the time range when running the report.

Finally, click Save to create the report.

After saving the report, Splunk provides options to configure it.

Important configuration options include:

Define who can view or edit the report.

You can schedule reports to run automatically at specific intervals.

Example:

Scheduled reports are often used for monitoring and alerts.

Reports can be added directly to dashboards for visualization.

After creating the report, click View to open it.

The report page shows:

Users can run the report again anytime to get updated results.

Sometimes the original search query needs to be updated.

Steps to edit the search:

A Splunk Report is a saved search result that shows statistics and visualizations. Reports can be scheduled, shared with users, added to dashboards, and updated by modifying the original search query.


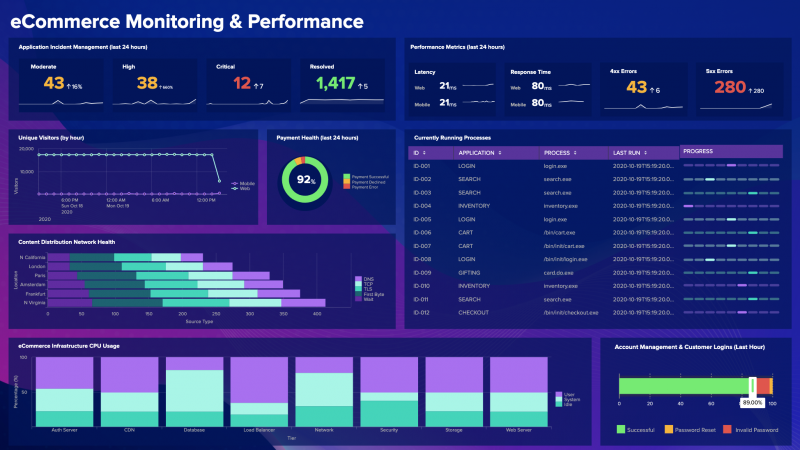

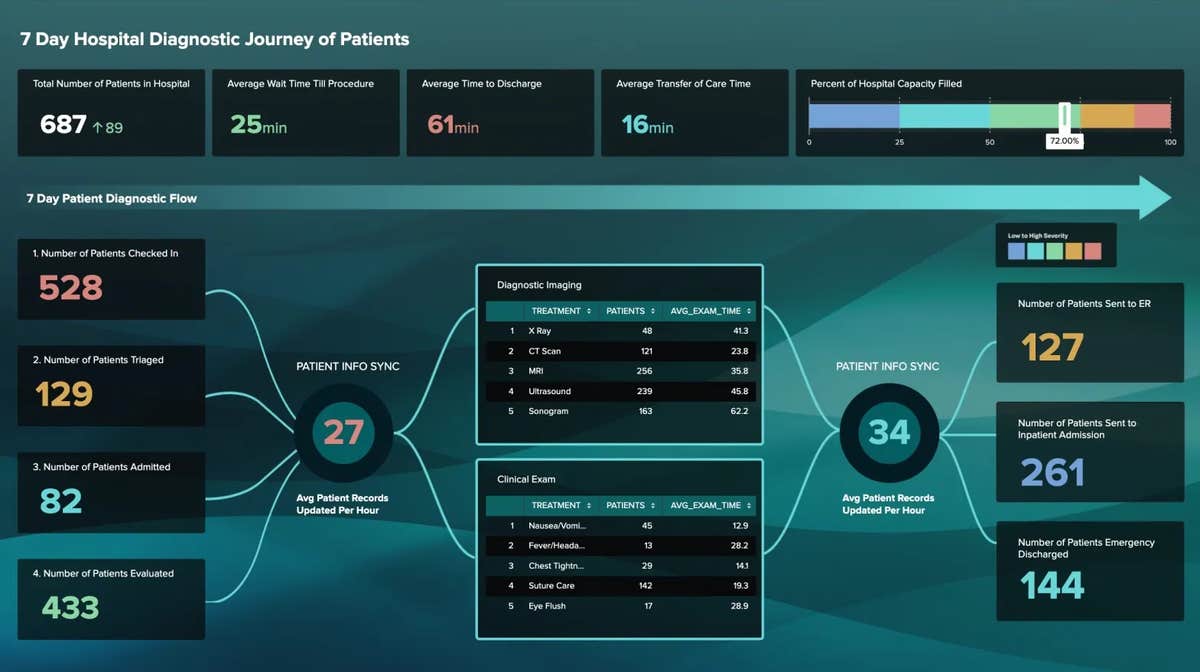

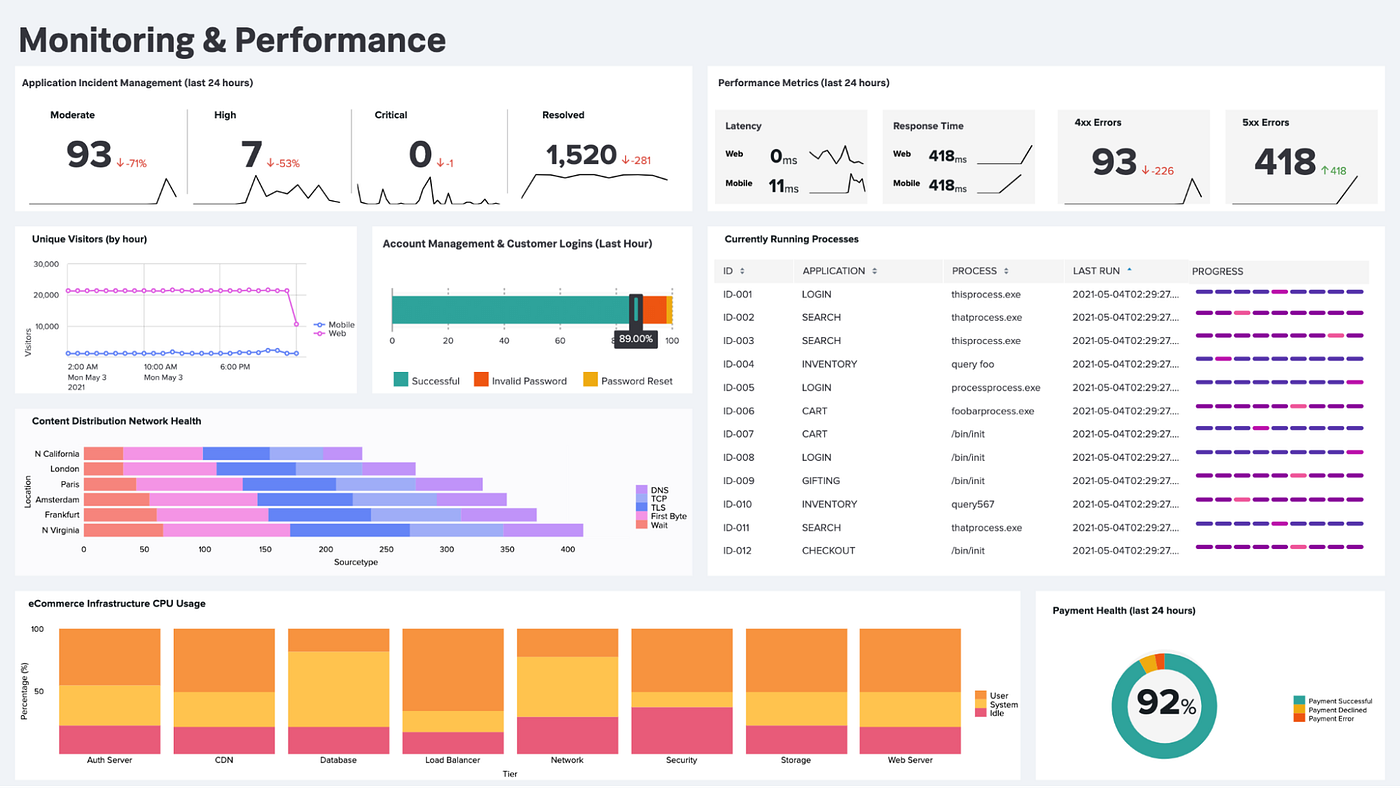

A Dashboard in Splunk is a visual interface that displays data using charts, tables, and reports.

Dashboards are used to monitor systems and analyze data quickly.

Key idea:

This helps present important information in a visually organized way.

Example panels may show:

Dashboards are usually created from search results and visualizations.

This will open the Create Dashboard Panel window.

In the next screen you must enter:

You can either:

Then click Save.

After saving:

Dashboard options include:

Dashboards can contain multiple panels.

To add another panel:

Now the dashboard will display multiple charts in different panels.

Example:

Splunk dashboards also support interactive inputs, such as:

These allow users to filter dashboard data dynamically.

A Splunk Dashboard is a visual display of reports and charts organized into panels.

Dashboards help users monitor systems, analyze trends, and present data visually.

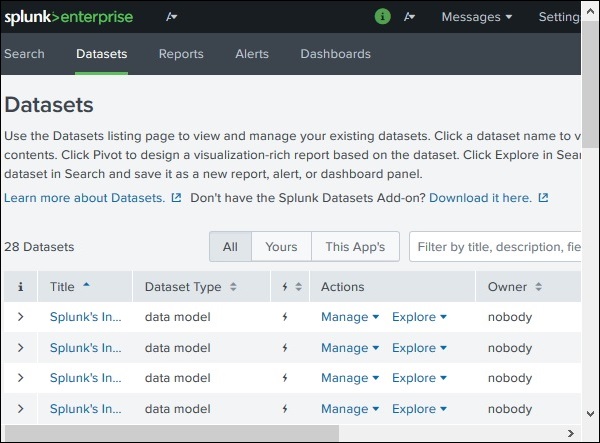

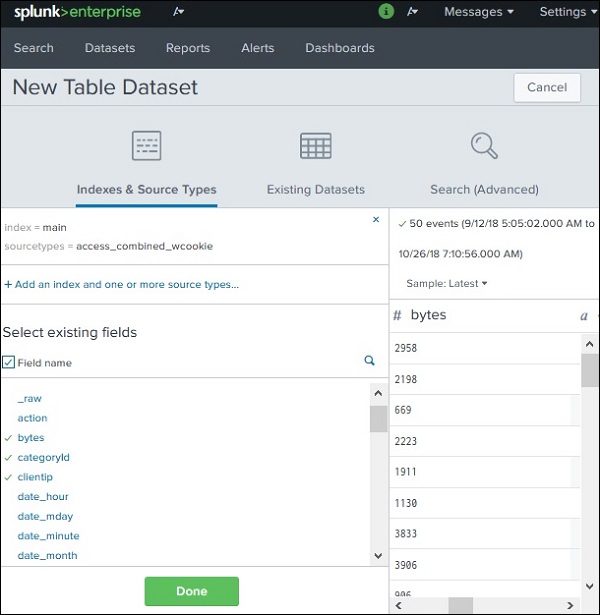

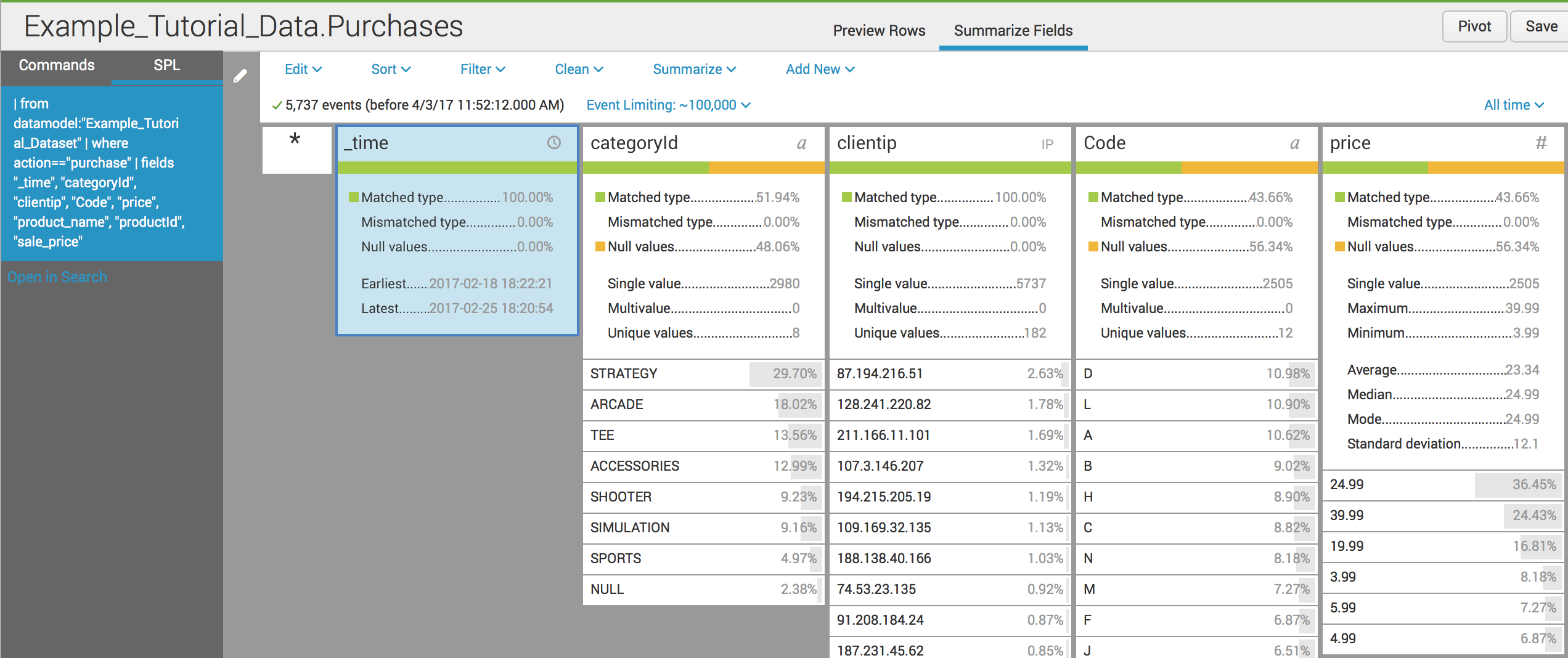

A Dataset in Splunk is a structured collection of data fields, similar to a table in a relational database.

Datasets make it easier to:

Instead of working directly with raw logs, users can work with organized datasets containing selected fields.

Datasets are created using the Splunk Datasets Add-on.

When creating a dataset, Splunk provides three options:

Use data that already exists in Splunk.

Example:

Create a new dataset from an existing dataset.

Use the results of a search query as the dataset.

Example:

In many cases, users select an existing index as the data source.

After selecting the source, Splunk asks which fields should be included in the dataset.

Example fields:

_time (default field – cannot be removed)

bytes

categoryID

clientIP

file

These fields become columns in the dataset table.

After selecting the fields, click Done.

The dataset now looks like a structured table.

Finally, click Save As to store the dataset.

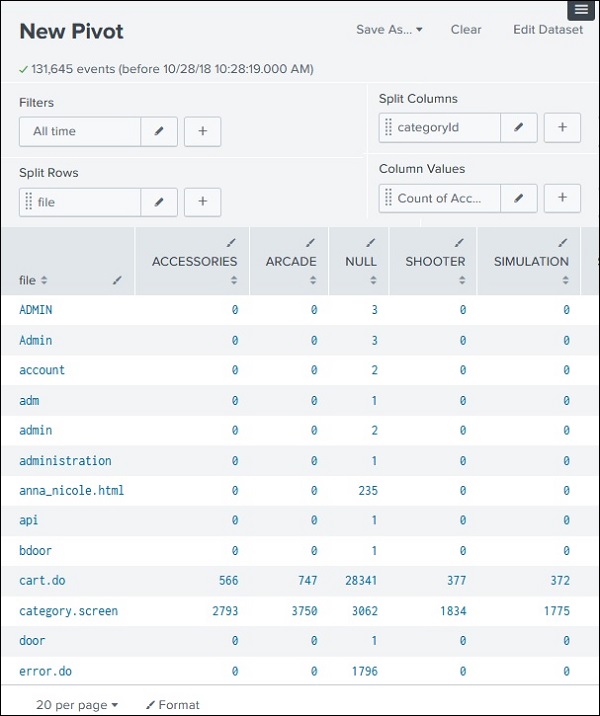

A Pivot is a tool used to summarize and analyze dataset information.

Pivot reports perform aggregation, similar to pivot tables in Excel.

Example:

Go to the Datasets tab and select your dataset.

Click Actions → Visualize with Pivot.

In the pivot editor you define:

Split Columns

Example:

Split Rows

Example:

The pivot table will show counts of each categoryID for each file.

Example:

| File | Category 1 | Category 2 | Category 3 |
|---|---|---|---|
| file1 | 5 | 2 | 3 |
| file2 | 4 | 1 | 6 |

This allows users to quickly analyze patterns in the data.

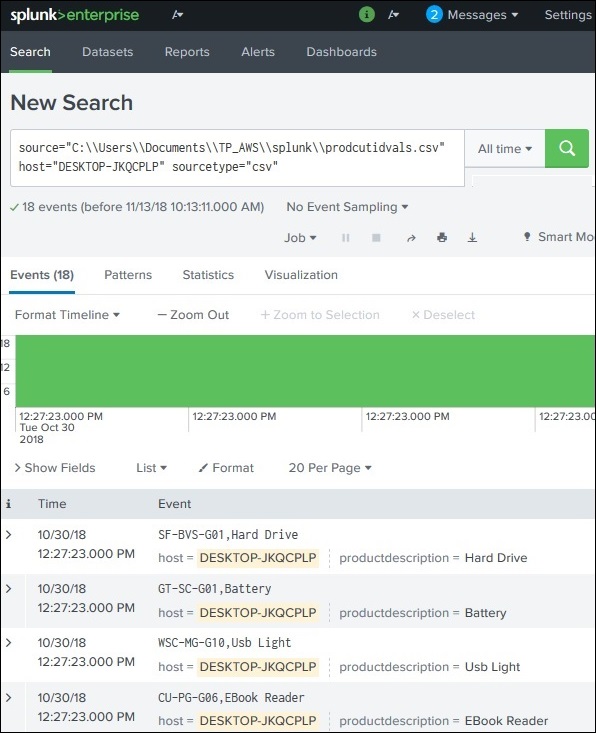

A Lookup in Splunk is used to enrich search results by adding additional information from another dataset.

Sometimes log data contains codes or IDs that are not easy to understand.

For example:

| productid |
|---|
| WC-SH-G04 |
| DB-SG-G01 |

These values do not clearly explain the product.

Using a lookup table, we can map these IDs to meaningful descriptions.

Example lookup result:

| productid | productdescription |
|---|---|
| WC-SH-G04 | Tablets |
| DB-SG-G01 | PCs |

This process of matching fields from two datasets is called a Lookup.

First, create a CSV file containing the mapping values.

Example: productidvals.csv

Important rule:

The field name must match the field in the dataset (productid).

Steps:

Now the CSV file becomes a lookup table in Splunk.

A Lookup Definition tells Splunk how to use the lookup table.

Steps:

Now Splunk knows which file to use for the lookup process.

Next, enable the field for searching:

Now you can use the lookup field in your search.

Example:

Result:

The search result will now display product names instead of just IDs.
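A minimal sketch of such a search, assuming the lookup definition was named productdetails (a hypothetical name) and the events contain a productid field:

```spl
productid=*
| lookup productdetails productid OUTPUT productdescription
| stats count BY productid, productdescription
```

The lookup command matches each event's productid against the lookup table and adds the productdescription column to the results.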

A Lookup in Splunk is used to add meaningful information to search results by matching fields with values from another dataset (usually a CSV file).

Example:

This improves data readability and analysis.

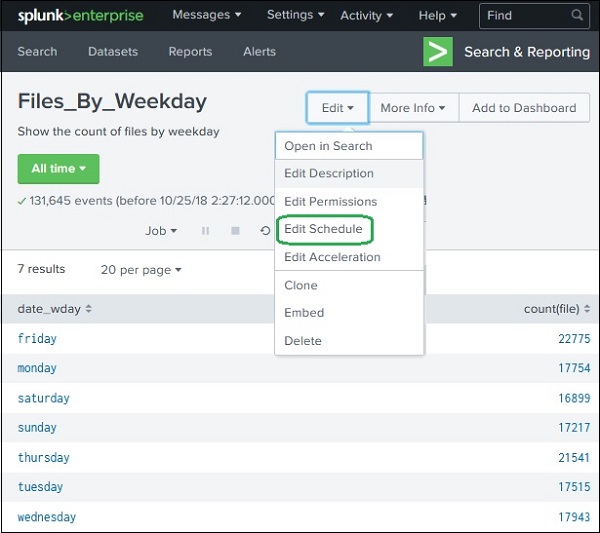

In Splunk, Scheduling and Alerts help automate monitoring tasks.

These features are widely used in system monitoring, security operations, and performance tracking.

Scheduling means running a report or search automatically at predefined intervals.

Steps:

You will see scheduling options.

Example configuration:

Defines the data time period used in the report.

Examples:

Determines which report runs first when multiple reports are scheduled at the same time.

Higher priority reports run earlier.

Allows a report to run within a flexible time window.

Example:

This helps balance system load.

After a scheduled report runs, Splunk can perform actions.

Examples:

This is configured using the Add Actions option.

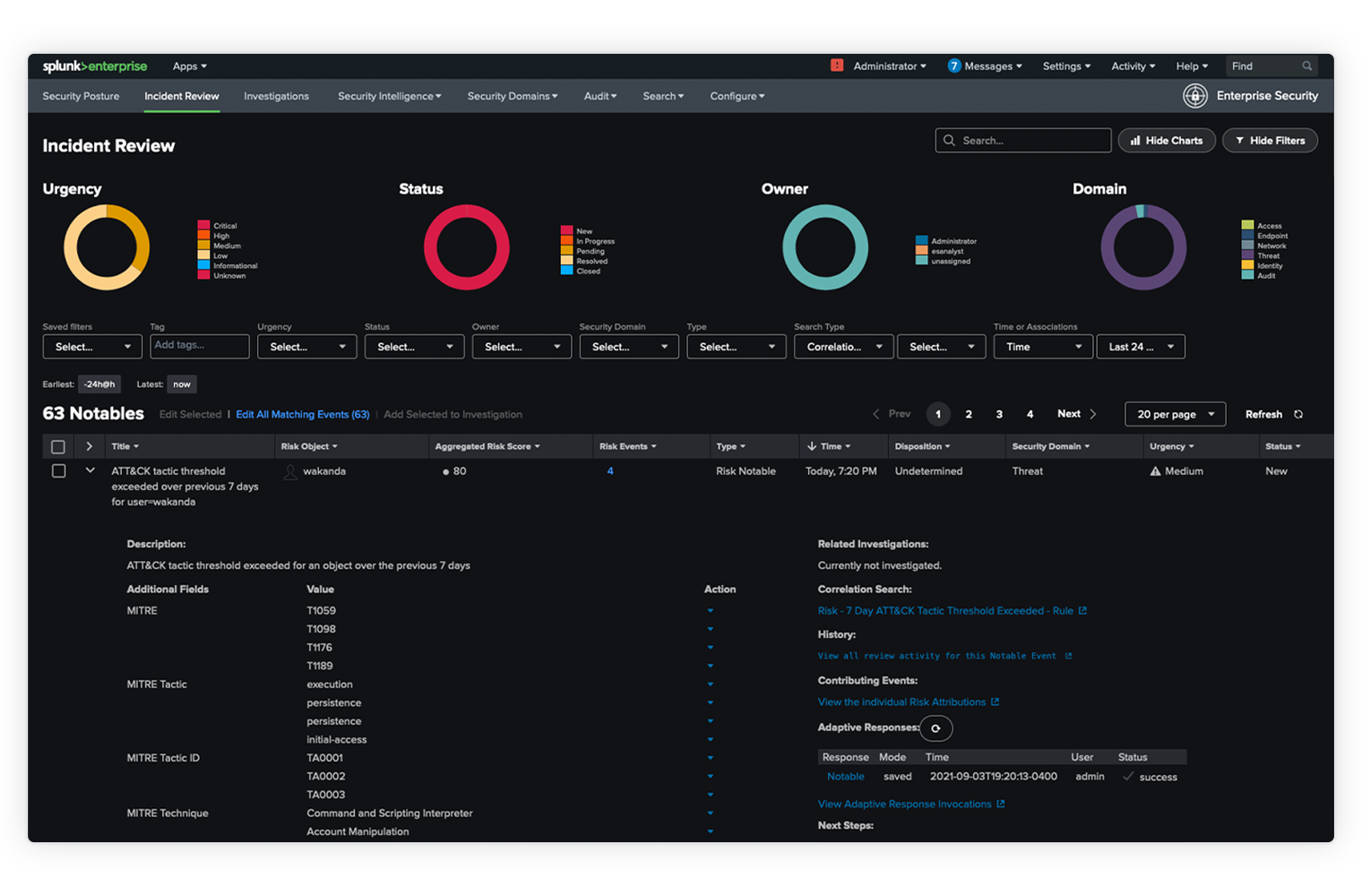

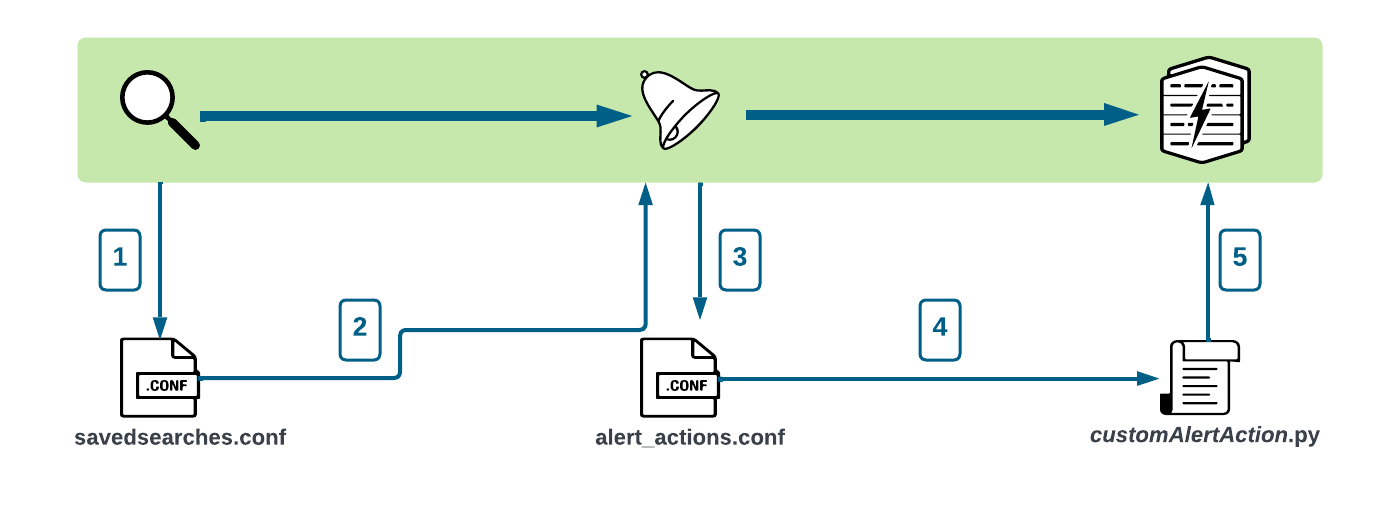

Alerts are automatic actions triggered when specific conditions occur in search results.

Alerts help detect:

Example:

Steps:

This opens the Alert Configuration screen.

Name of the alert.

Explanation of what the alert monitors.

Defines who can:

Options:

Two types of alerts:

Scheduled Alert

Real-Time Alert

Defines when the alert should activate.

Examples:

Options include:

When the alert condition is satisfied, Splunk can perform actions such as:

Simple Summary

Knowledge Management (KM) in Splunk is the process of organizing, managing, and sharing knowledge objects so users can better understand and analyze machine data.

It helps transform raw machine data → meaningful information by adding structure, fields, tags, lookups, and data models.

A Knowledge Object is a Splunk configuration or object that adds meaning to raw data.

When creating a knowledge object, it can be:

When using Splunk regularly, many knowledge objects are created.

Without management, this can cause:

Knowledge Management helps:

Fields are individual pieces of information extracted from raw data.

Example log:

Extracted fields:

| Field | Value |
|---|---|
| IP | 192.168.1.1 |
| user | admin |
| status | failed |

Two types:

Automatic extraction

Manual extraction

These fields help structure raw logs into searchable data.

Used to group similar events together.

Example:

This may include all logs containing failed login attempts.

A transaction groups related events across time.

Example:

All actions belong to one user session.
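A minimal sketch, assuming web access events in which the clientip field identifies a visitor and a session is treated as ended after 30 minutes of inactivity (both are assumptions for illustration):

```spl
sourcetype=access_combined | transaction clientip maxpause=30m
```

Each result is one combined event containing all the requests from that client that fall into the same session.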

Lookups add extra information from external datasets.

Example:

| productid | productname |
|---|---|
| P01 | Laptop |

Lookup adds productname to search results.

Sources:

Workflow actions allow interaction with external tools or resources.

Example:

This helps integrate Splunk with other applications.

Tags are used to group related events or fields.

Example:

Hosts in New York office may all receive tag:

This allows easy filtering.

Aliases are used when different field names represent the same data.

Example:

| Original Field | Alias |
|---|---|
| clientip | ipaddress |

Now both fields refer to the same information.

This helps normalize data across different sources.

A Data Model is a structured representation of datasets.

It helps users analyze data without writing SPL queries.

Data models are mainly used by:

Example data model structure:

Users can easily generate:

Knowledge Management in Splunk organizes and manages objects that give meaning to machine data.

Main knowledge objects include:

| Knowledge Object | Purpose |
|---|---|
| Fields | Extract information from logs |
| Event Types | Group similar events |
| Transactions | Link related events |
| Lookups | Add external data |
| Tags | Group related fields/events |
| Aliases | Normalize field names |
| Data Models | Structured datasets for analysis |

Knowledge Management helps convert raw machine data into structured, meaningful, and searchable information in Splunk.

A Subsearch is a search inside another search where the result of the inner search becomes the input for the outer search.

It is similar to a Subquery in SQL.

Key idea:

Important rule:

In Splunk, subsearches are written inside square brackets.

Example structure:

Flow of execution:

Goal:

Find events where the file size is equal to the maximum file size and occurred on Sunday.

Steps involved:

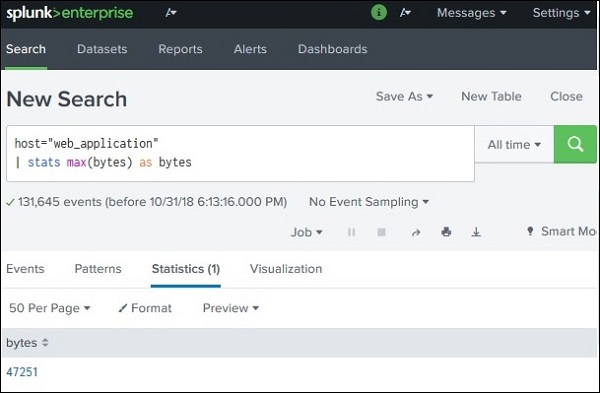

We first calculate the maximum file size.

Example:

Explanation:

stats → statistical command

max(bytes) → finds the maximum value of the bytes field

Result example:

| max(bytes) |
|---|
| 10500 |

This value will be used by the main query.
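The inner search on its own can be sketched as follows, assuming the web logs are identified by an illustrative host value of web_application. Naming the result bytes (instead of the default max(bytes) column name) lets a main query match it against the bytes field:

```spl
host="web_application" | stats max(bytes) AS bytes
```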

Now we insert the subsearch into the main query.

Example:

But usually we combine it with filters like day of week.

Example query:
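A sketch of the combined query, assuming an illustrative host value of web_application and that the default date_wday field is available for the events:

```spl
host="web_application" date_wday=sunday
    [ search host="web_application" | stats max(bytes) AS bytes ]
```

The subsearch returns a single bytes value, which Splunk turns into a bytes=<value> condition in the main search.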

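Execution steps:

1. Splunk runs the subsearch, which returns the maximum value of bytes (10500 in the example result above).

2. Splunk inserts that result into the main search, so the final search effectively filters on bytes=10500.

3. Splunk returns only events where the file size equals that maximum and the event occurred on Sunday.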

| Feature | Description |
|---|---|
| Execution Order | Subsearch runs first |
| Syntax | Written inside [ ] |
| Result Use | Used as input for main search |
| Similar Concept | SQL Subqueries |

Example:

Find users who logged in successfully and are listed in another dataset.

Here:

✔ Allows dynamic filtering

✔ Supports complex queries

✔ Useful for data correlation

✔ Reduces manual query updates

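Subsearch in Splunk is a search nested inside another search where the inner search runs first, is written inside square brackets [ ], and passes its result to the outer search as input.

Example: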

This technique helps create dynamic and advanced searches.

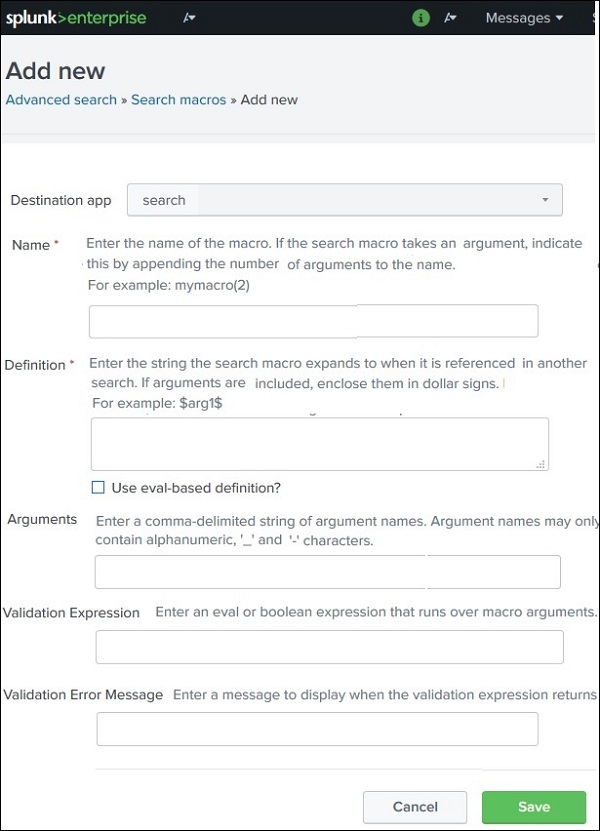

Search Macros in Splunk are reusable pieces of SPL (Search Processing Language) that can be inserted into multiple searches.

Instead of writing the same long SPL query again and again, you can create a macro and reuse it.

Think of a macro like a function in programming.

Example idea:

Splunk will replace the macro with the defined SPL query when the search runs.

Search macros help to:

Example use cases:

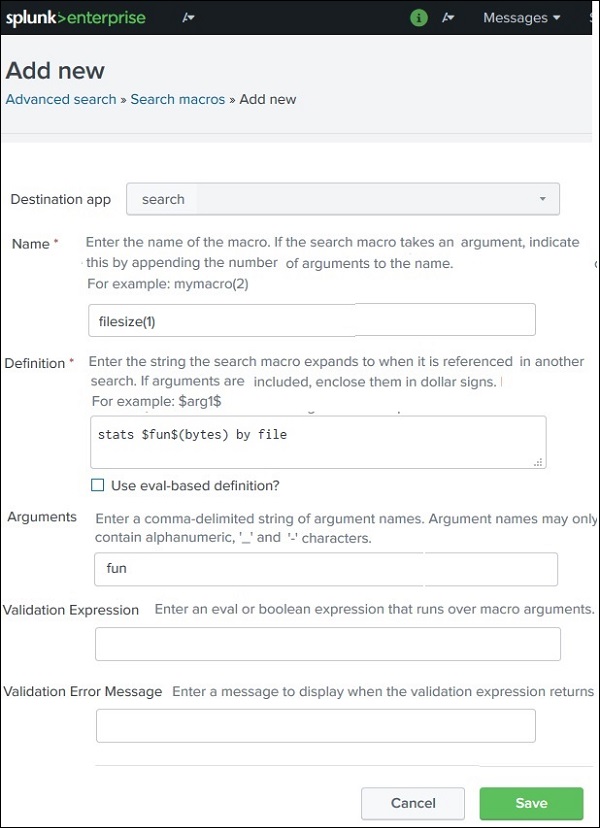

Steps to create a macro:

This opens the macro creation configuration page.

Goal:

We want to calculate statistics of file sizes from logs.

Using the field:

We want to dynamically calculate:

Instead of writing separate SPL queries, we create one macro.

Macro name example:

Explanation:

(1) means one argument is required

Argument name:

Macro definition SPL:

Here:

$fun$ is replaced by avg, max, or min

Macros are called by wrapping the macro name in backtick characters.
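As a sketch, the macro definition could be the following SPL fragment. The use of $fun$ and the bytes field follows the description above, while grouping by file is inferred from the output tables in this section:

```spl
stats $fun$(bytes) BY file
```

With the macro saved under the hypothetical name filesize_stats(1) and the argument named fun, it could then be called like this (each line is a separate search, using an illustrative source type):

```spl
sourcetype=access_combined | `filesize_stats(avg)`
sourcetype=access_combined | `filesize_stats(max)`
sourcetype=access_combined | `filesize_stats(min)`
```

When a search runs, Splunk expands each call into the definition with $fun$ replaced by the supplied value, for example stats avg(bytes) BY file.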

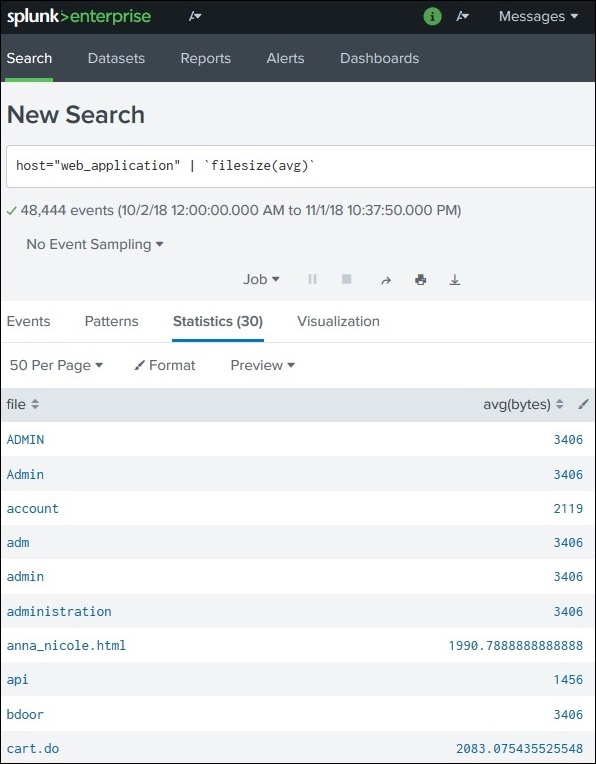

Search:

Output:

| file | avg(bytes) |
|---|---|
| file1.html | 520 |
| file2.jpg | 1020 |

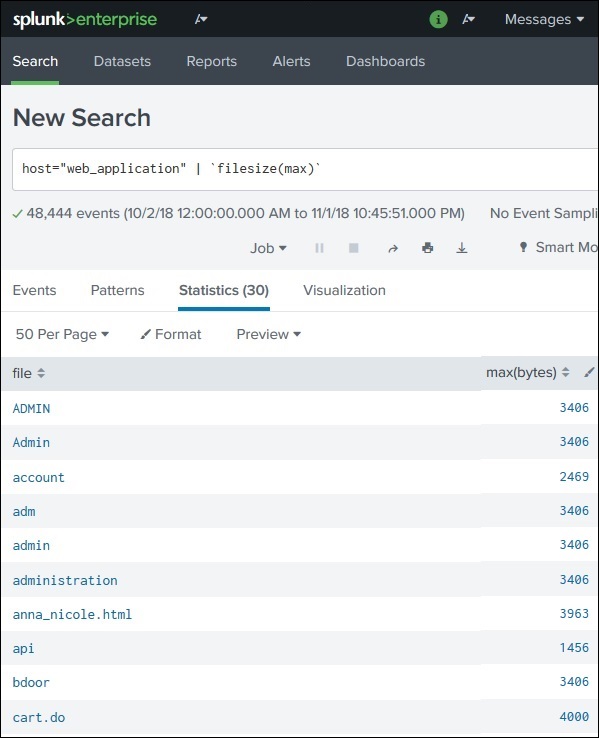

Search:

Output:

| file | max(bytes) |
|---|---|
| file1.html | 980 |
| file2.jpg | 2100 |

Search:

Output:

| file | min(bytes) |
|---|---|
| file1.html | 200 |
| file2.jpg | 500 |

| Element | Description |
|---|---|
| `(1)` | Number of macro arguments |
| `$argument$` | Used inside macro definition |
| `` `macro_name()` `` | Used to call macro |

Example:

✔ Reusable SPL code

✔ Shorter search queries

✔ Easier maintenance

✔ Dynamic arguments support

✔ Useful for large Splunk environments

A Search Macro is a reusable SPL block that acts like a function.

Key points:

Example:

This helps reuse complex search logic easily across multiple queries.

An Event Type in Splunk is a saved search that categorizes a specific group of events based on defined criteria.

Instead of repeatedly writing the same search query, you can save the search as an event type and reuse it later.

In simple words:

Event Type = Named search that identifies a specific group of events.

Suppose your logs contain HTTP status codes.

You want to identify all events where:

This means the HTTP request was successful.

Instead of searching every time like this:

You can create an event type called:

Now you can simply search:
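Sketching that contrast, with status_successful as a hypothetical event type name (each line is a separate search):

```spl
status=200
eventtype=status_successful
```

Both searches return the same events once the event type has been saved with status=200 as its search string.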

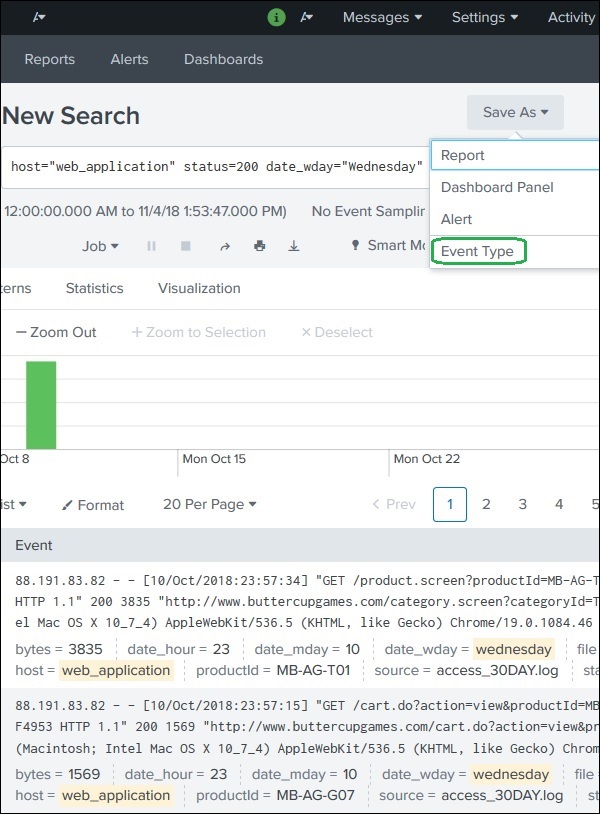

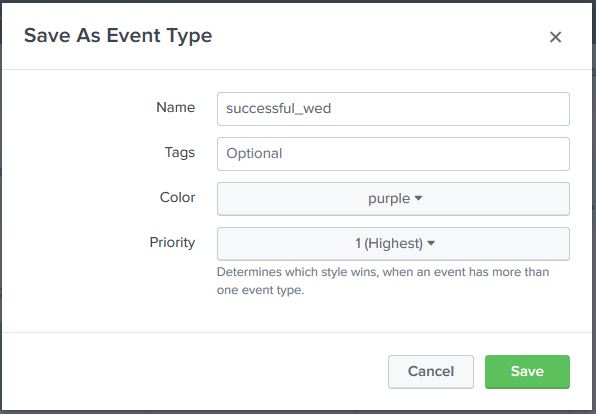

There are two main methods.

Step-by-step process:

Example:

Then configure the event type.

Name

Example:

Tags (Optional)

Tags help categorize event types.

Example:

Color

Used to highlight matching events in search results.

Example:

Priority

If multiple event types match the same event, priority decides which event type appears first.

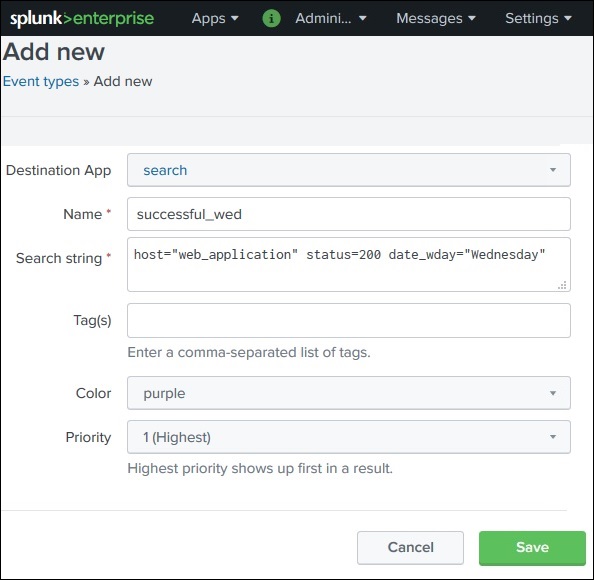

Another method:

Steps:

Then enter the same search query manually.

Example:

After saving, the event type becomes available for future searches.

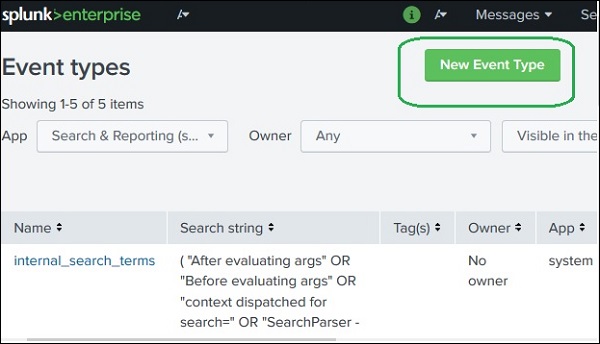

To view existing event types:

This page shows:

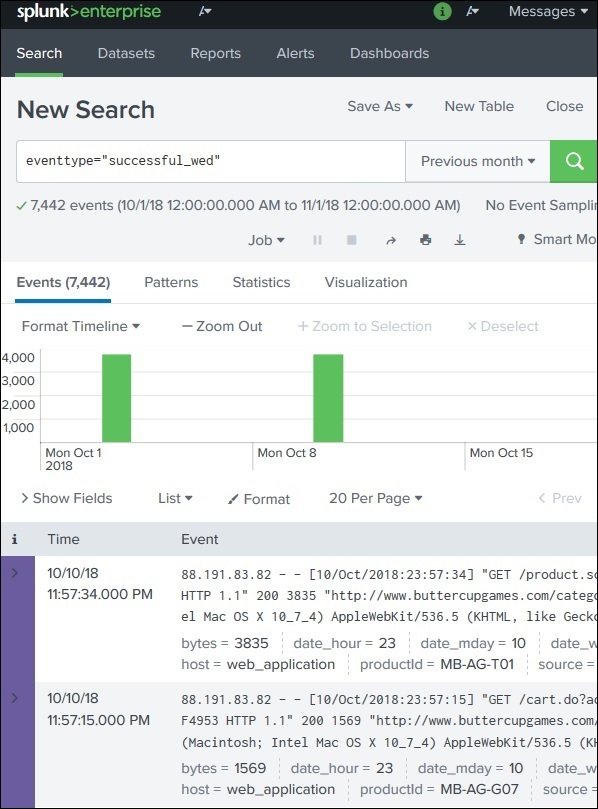

After creating an event type, you can use it in SPL queries.

Example:

Splunk automatically expands it to:

You can combine event types with other filters.

Example:
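For instance, assuming the hypothetical event type status_successful and an illustrative host value of server1:

```spl
eventtype=status_successful host="server1"
```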

Result:

Matching events will appear highlighted with the chosen color.

✔ Simplifies complex searches

✔ Reusable search logic

✔ Improves search readability

✔ Helps categorize events

✔ Supports tagging and highlighting

| Feature | Event Type | Saved Search |
|---|---|---|
| Purpose | Categorize events | Save full search |
| Usage | Used inside searches | Run directly |
| Highlighting | Yes | No |

An Event Type is a saved search that groups events based on defined criteria.

Example:

Search condition:

Event Type created:

Usage:

This helps quickly identify and reuse specific types of events in Splunk searches.

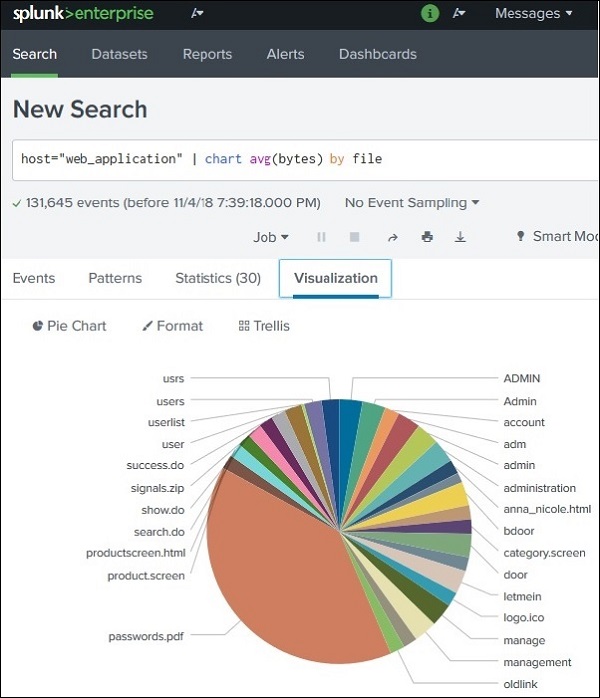

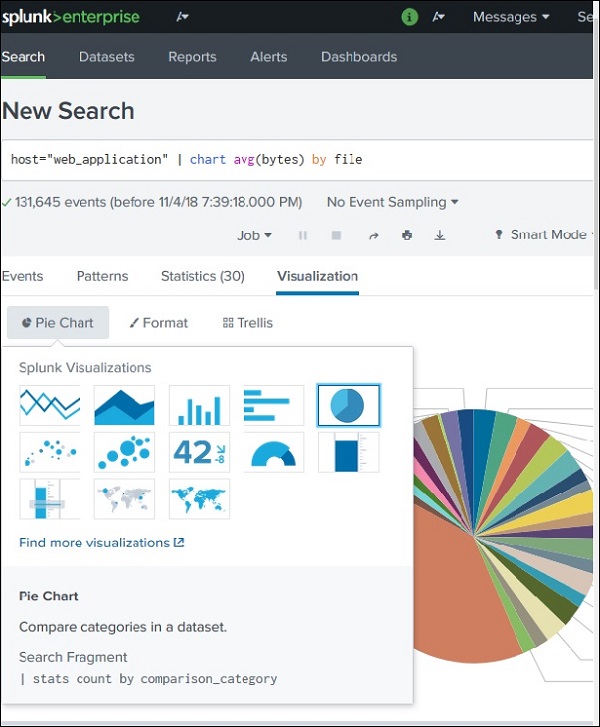

Splunk provides powerful visualization features that convert search results into graphical charts. These charts help users analyze trends, patterns, and statistics visually.

Charts are created from search queries that produce numerical or statistical results.

Example data source:

Example goal:

Find average file size (bytes) and display it as a chart.

Before creating a chart, the search must produce statistical output.

Example SPL query:

Result appears in the Statistics tab.

Example output:

| file | avg(bytes) |
|---|---|
| file1.html | 520 |
| file2.jpg | 1200 |
| file3.png | 980 |

This statistical data becomes the input for chart visualization.
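A search that produces this kind of output can be sketched as follows, assuming web access events with bytes and file fields and an illustrative source type:

```spl
sourcetype=access_combined | stats avg(bytes) BY file
```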

Steps:

Splunk automatically creates a default chart, usually a Pie Chart.

Example visualization:

Splunk allows multiple chart types.

Common chart options include:

| Chart Type | Purpose |
|---|---|
| Pie Chart | Shows proportions |
| Bar Chart | Compares values |
| Column Chart | Displays grouped comparisons |
| Line Chart | Shows trends over time |
| Area Chart | Displays cumulative trends |

Example:

Switching from Pie Chart → Bar Chart makes file sizes easier to compare.

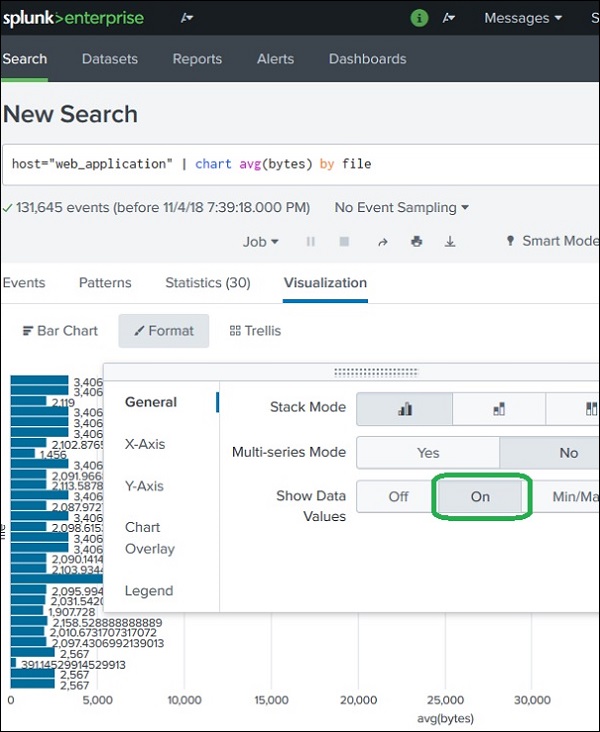

Splunk also allows customizing the chart appearance.

Click Format to modify chart settings.

Formatting options include:

Control labels and scale of:

Legends describe what each color or value represents.

Example:

Display actual numerical values on the chart.

Example:

Example:

Horizontal charts are often easier to read when many values exist.

Example search generating chart data:

Visualization output:

✔ Easy data visualization

✔ Identify trends quickly

✔ Improve dashboards

✔ Better data analysis

✔ Useful for reports and presentations

A Basic Chart in Splunk converts statistical search results into visual graphs.

Steps:

Example SPL:

This can be visualized as:

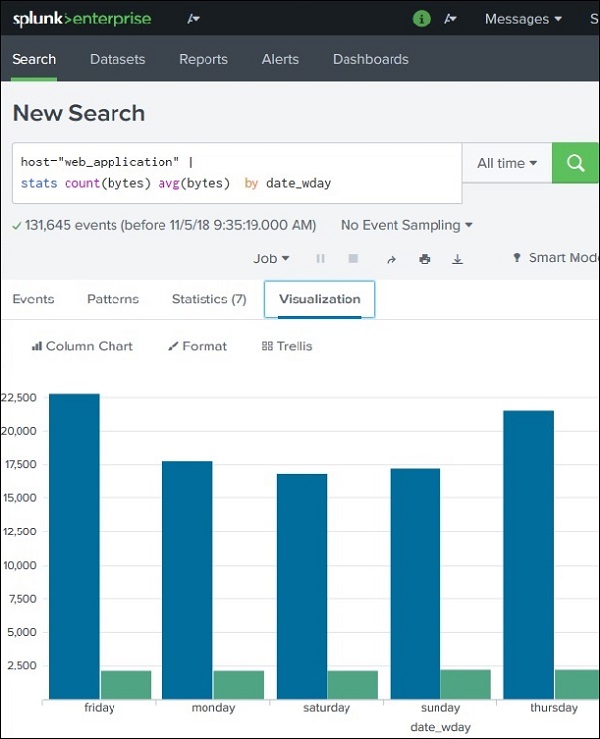

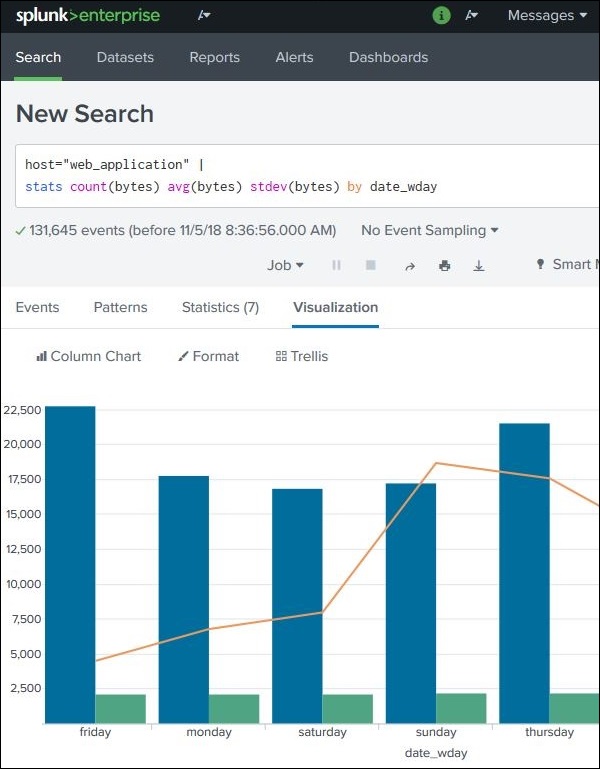

An Overlay Chart in Splunk is used to display one chart on top of another so that multiple metrics can be compared in the same visualization.

Typically:

This helps identify patterns, correlations, and trends between datasets.

Example comparison:

Suppose we want to analyze file sizes from web application logs across different days of the week.

We calculate:

1️⃣ Total bytes

2️⃣ Average bytes

3️⃣ Standard deviation of bytes

These metrics help understand file size distribution.

First create a chart with two metrics.

Example SPL query:
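One way to write this, assuming the same hypothetical web_application host and Splunk's default date_wday field for the day of the week:

```spl
host="web_application"
| stats sum(bytes) as total_bytes avg(bytes) as avg_bytes by date_wday
| rename date_wday as weekday
```

The as clauses give the two metrics readable column names, which also become the legend labels in the chart.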

Example statistical output:

| weekday | total_bytes | avg_bytes |

|---|---|---|

| Monday | 12000 | 800 |

| Tuesday | 15000 | 900 |

| Wednesday | 17000 | 950 |

This data can be visualized as a bar chart.

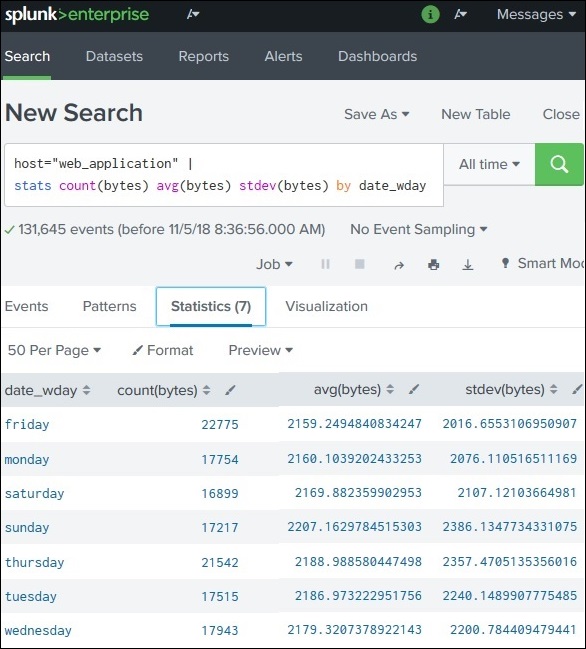

To create an overlay chart, add another statistical measure such as standard deviation.

Example updated query:
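A sketch of the extended search, again assuming a host named web_application; stdev(bytes) is the only addition:

```spl
host="web_application"
| stats sum(bytes) avg(bytes) stdev(bytes) by date_wday
| rename date_wday as weekday
```

Because the sum is much larger than the standard deviation, the overlay field is usually easier to read when it is placed on its own secondary axis.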

Now the statistics tab contains:

| weekday | sum(bytes) | avg(bytes) | stdev(bytes) |

|---|---|---|---|

| Monday | 12000 | 800 | 150 |

| Tuesday | 15000 | 900 | 200 |

| Wednesday | 17000 | 950 | 220 |

This extra field enables the overlay visualization.

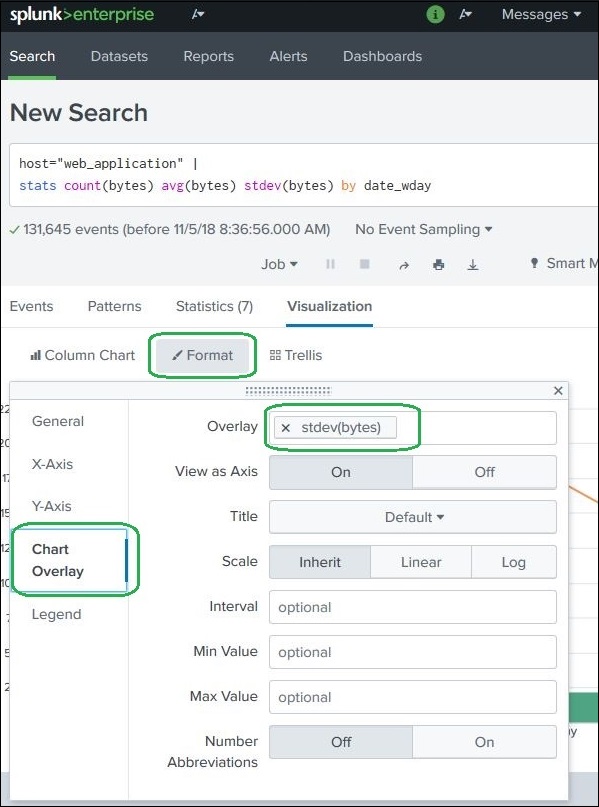

Steps:

A configuration window appears.

Choose the field to overlay.

Example:

Other optional settings include:

| Option | Purpose |

|---|---|

| Title | Chart title |

| Scale | Secondary axis scale |

| Min/Max | Axis value limits |

| Interval | Axis spacing |

Usually default settings work fine.

The final overlay chart typically shows:

This allows users to see:

Overlay charts are commonly used for:

| Scenario | Example |

|---|---|

| Performance Monitoring | CPU usage vs average CPU |

| Network Monitoring | Traffic vs packet loss |

| Security Monitoring | Login attempts vs anomaly score |

| Business Analytics | Sales vs average sales |

✔ Compare multiple metrics easily

✔ Identify trends quickly

✔ Detect anomalies

✔ Improve dashboard analytics

✔ Provide deeper data insights

An Overlay Chart in Splunk displays two charts together in one visualization.

Steps:

1️⃣ Create a chart with statistical values

2️⃣ Add a third metric

3️⃣ Use Visualization → Format → Chart Overlay

4️⃣ Select the overlay field

Example SPL query:

This helps compare main metrics with trend indicators in the same chart.

A Sparkline is a small, compact chart that shows trends over time inside a table cell.

Unlike normal charts, sparklines do not display axes or labels. They appear as tiny line graphs that show how a value changes over time.

They are useful for quickly understanding trends or fluctuations in data.

Example idea:

| File | Avg Bytes | Trend |

|---|---|---|

| file1.html | 800 | ▁▃▅▇ |

| file2.jpg | 1200 | ▂▆▃▇ |

The small graph indicates how the value changed over time.

Sparklines help:

They are commonly used in monitoring dashboards and reports.

First, run a search that produces statistical values.

Example SPL query:

Result example:

| file | avg(bytes) |

|---|---|

| file1.html | 820 |

| file2.jpg | 1200 |

| file3.png | 950 |

This statistical data will be used to create the sparkline.

To generate sparklines, use the sparkline() function with the stats command.

Example query:
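A minimal sketch, assuming the events come from a host named web_application (an assumption) and that the sparkline column should be called trend:

```spl
host="web_application"
| stats avg(bytes) sparkline(avg(bytes)) as trend by file
```

The sparkline() function wraps another aggregation, here avg(bytes), and plots how that aggregation changes across the search time range.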

Result:

| file | avg(bytes) | trend |

|---|---|---|

| file1.html | 820 | tiny graph |

| file2.jpg | 1200 | tiny graph |

| file3.png | 950 | tiny graph |

Each row now shows a mini trend graph representing changes in average bytes.

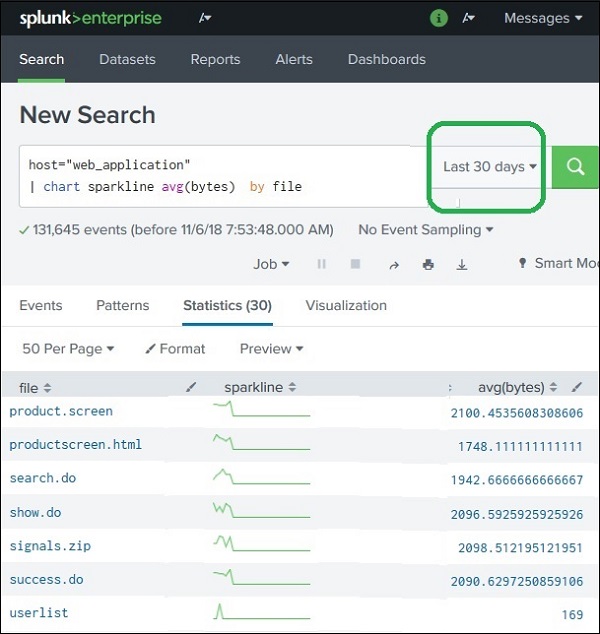

The sparkline graph depends on the selected time range.

Example:

With the time range set to All time, the sparkline displays the trend for the entire dataset.

With the time range set to Last 30 days, only data from the last 30 days is used.

Effects:

Example SPL query with sparkline:
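The time range can also be set inside the search itself. The sketch below assumes a host named web_application, limits the search to the last 30 days, and asks for one sparkline point per day; the 1d span is an illustrative choice:

```spl
host="web_application" earliest=-30d@d latest=now
| stats avg(bytes) sparkline(avg(bytes), 1d) as trend by file
```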

Explanation:

| Command | Purpose |

|---|---|

| stats | Generates statistical results |

| avg(bytes) | Calculates average file size |

| sparkline() | Generates mini trend chart |

✔ Shows trends in a compact format

✔ Works inside tables and dashboards

✔ No extra chart space required

✔ Useful for monitoring and comparisons

Sparklines are commonly used for:

| Use Case | Example |

|---|---|

| Server Monitoring | CPU usage trend |

| Network Monitoring | Traffic fluctuation |

| Security Monitoring | Login attempts over time |

| Business Analytics | Sales trend per product |

A Sparkline in Splunk is a small trend chart displayed inside a table cell.

Key points:

Example SPL:

This displays mini trend charts for each file.

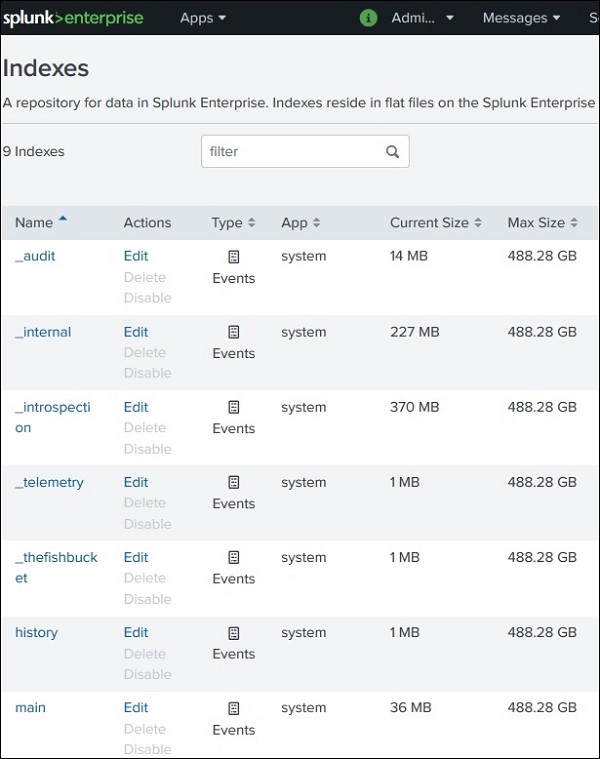

An Index in Splunk is a storage location where processed machine data is stored and organized for fast searching.

Indexing works similarly to database indexing: data is given structured references so that searches can be executed quickly.

When data enters Splunk:

When Splunk is installed, it automatically creates several default indexes. The three most commonly used are:

| Index Name | Purpose |

|---|---|

| main | Default index where most ingested data is stored |

| _internal | Stores Splunk system logs and performance metrics |

| _audit | Stores user activity and audit logs |

Example SPL:
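A minimal sketch; the head command is added only to keep the result set small:

```spl
index=main
| head 10
```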

This searches events stored in the main index.

Example:
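A minimal sketch; note that the internal index is addressed with a leading underscore:

```spl
index=_internal
| head 10
```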

This searches Splunk system logs.

The Indexer component in Splunk is responsible for:

So the flow is:

You can view available indexes in Splunk.

Steps:

This displays a list of:

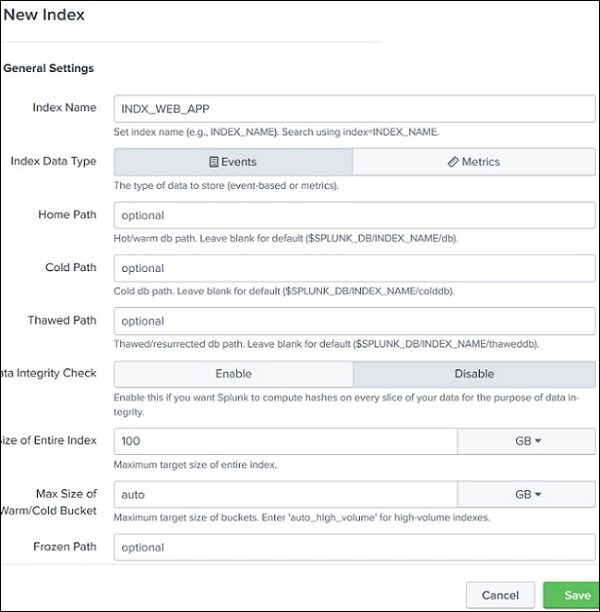
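The list of indexes can also be retrieved from the search bar. The sketch below is one common approach using the eventcount command; it only returns indexes that the current role is allowed to search:

```spl
| eventcount summarize=false index=* index=_*
| dedup index
| fields index
```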

Sometimes you may want to separate different types of data into different indexes.

Example reasons:

Steps to create an index:

You will see a configuration screen.

| Field | Description |

|---|---|

| Index Name | Name of the new index |

| Storage Path | Location where data is stored |

| Max Size | Maximum storage allocation |

| Data Retention | Time period for storing data |

Example:

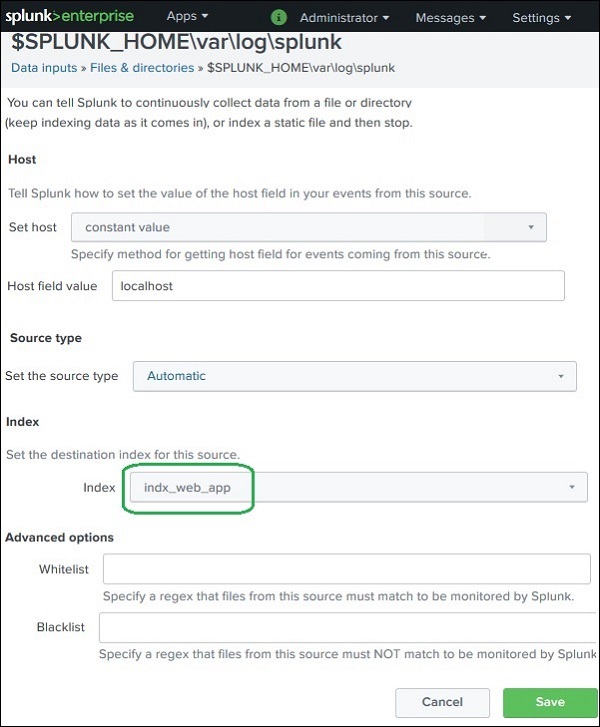

After creating an index, new data must be configured to use that index.

Steps:

Example:

Now all events from that data source will be stored in the new index.

Example SPL query:
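A minimal sketch, assuming the custom index was named web_logs (a hypothetical name used for illustration):

```spl
index=web_logs
| head 20
```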

This searches only the data stored in the custom index.

✔ Better data organization

✔ Faster searches

✔ Easier data management

✔ Separate storage for different log types

✔ Improved security control

Example:

| Index | Data Type |

|---|---|

| web_logs | Website logs |

| security_logs | Security events |

| system_logs | Server logs |

An Index in Splunk is a structured storage location for processed machine data.

Key points:

Example SPL:

This searches events stored in the main index.

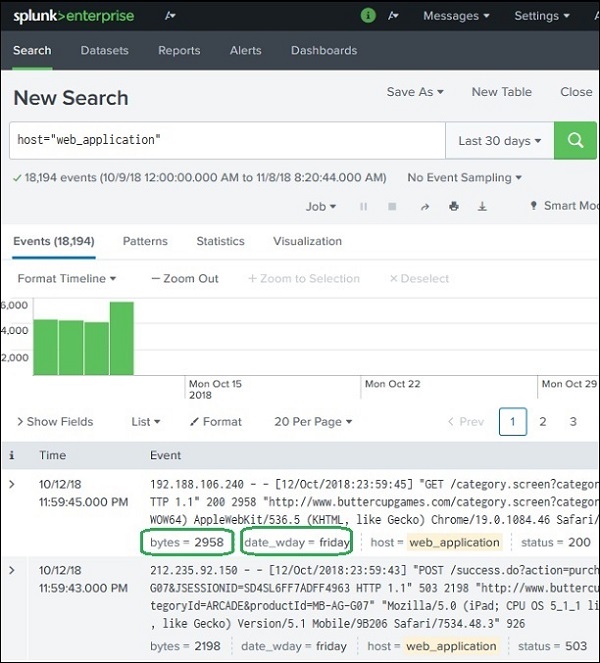

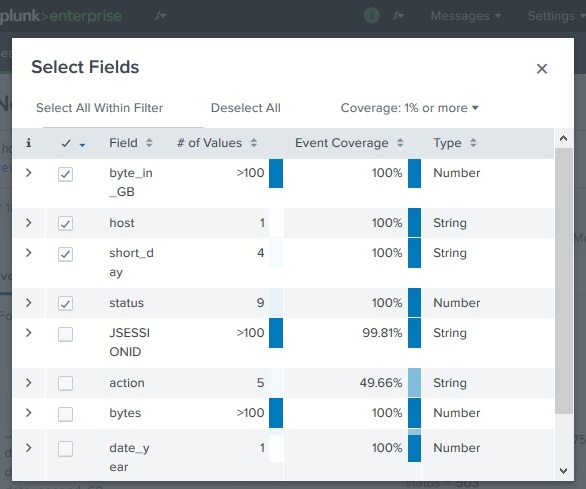

Calculated Fields in Splunk are new fields created by applying calculations or transformations to existing fields in events.

They are useful when you want to:

These calculations are usually done using the eval command in SPL.

Calculated fields help:

Example uses:

| Original Field | Calculated Field |

|---|---|

| bytes | byte_in_GB |

| date_wday | short_day |

Suppose the web_application log contains the following fields:

| bytes | date_wday |

|---|---|

| 2048 | Wednesday |

| 4096 | Thursday |

| 1024 | Monday |

Goals:

1️⃣ Convert bytes → GB

2️⃣ Show only the first three characters of the weekday

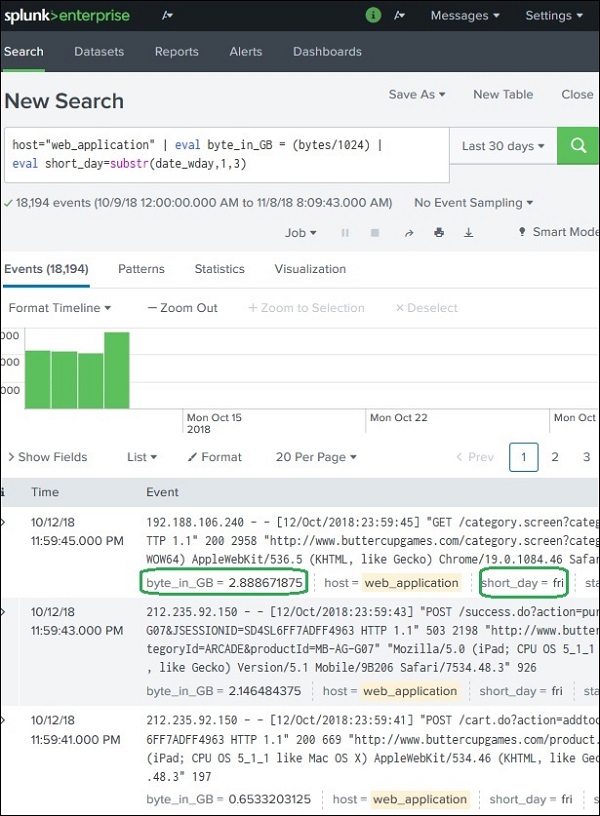

eval Function

Splunk uses the eval command to create calculated fields.

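To convert bytes to a larger unit, divide by a power of 1024: 1024 for KB, 1024 × 1024 for MB, and 1024 × 1024 × 1024 for GB. The example below divides by 1024, so the values in byte_in_GB are actually kilobytes despite the field name: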

Full search example:
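A sketch that reproduces values like those in the result table, assuming a host named web_application (an assumption). It divides by 1024, which strictly speaking yields kilobytes:

```spl
host="web_application"
| eval byte_in_GB = bytes / 1024
| table bytes byte_in_GB
```

For a true gigabyte value, the expression would be bytes / pow(1024, 3) instead.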

Result:

| bytes | byte_in_GB |

|---|---|

| 2048 | 2 |

| 4096 | 4 |

| 1024 | 1 |

To extract the first three characters from the date_wday field, use the substr() function.

Example search:
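A minimal sketch, assuming a host named web_application. In SPL, substr() positions start at 1, so the arguments 1 and 3 mean "start at the first character and take three characters":

```spl
host="web_application"
| eval short_day = substr(date_wday, 1, 3)
| table date_wday short_day
```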

Result:

| date_wday | short_day |

|---|---|

| Wednesday | Wed |

| Thursday | Thu |

| Monday | Mon |

You can apply multiple calculated fields in the same search.

Example SPL:
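One way to combine both calculations, assuming a host named web_application. A single eval can hold several comma-separated assignments:

```spl
host="web_application"
| eval byte_in_GB = bytes / 1024, short_day = substr(date_wday, 1, 3)
| table bytes byte_in_GB date_wday short_day
```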

Result:

| bytes | byte_in_GB | date_wday | short_day |

|---|---|---|---|

| 2048 | 2 | Wednesday | Wed |

| 4096 | 4 | Thursday | Thu |

After creating calculated fields, the new fields (byte_in_GB, short_day) appear alongside the original fields in the search results.

| Function | Purpose |

|---|---|

| eval | Create calculated fields |

| substr() | Extract part of a string |

| round() | Round numeric values |

| len() | Get string length |

| tonumber() | Convert string to number |

Example:
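A sketch that uses several of these functions together, assuming a host named web_application with the fields file and bytes. The output field names bytes_num, size_kb, and file_name_length are hypothetical and chosen for illustration:

```spl
host="web_application"
| eval bytes_num = tonumber(bytes)
| eval size_kb = round(bytes_num / 1024, 2), file_name_length = len(file)
| table file file_name_length bytes size_kb
```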

✔ Transform raw data into meaningful values

✔ Perform calculations during search

✔ Create reusable analytical fields

✔ Improve data interpretation

A Calculated Field is a new field created from existing fields using calculations.

It is usually created using the eval command.

Example:

This produces new fields:

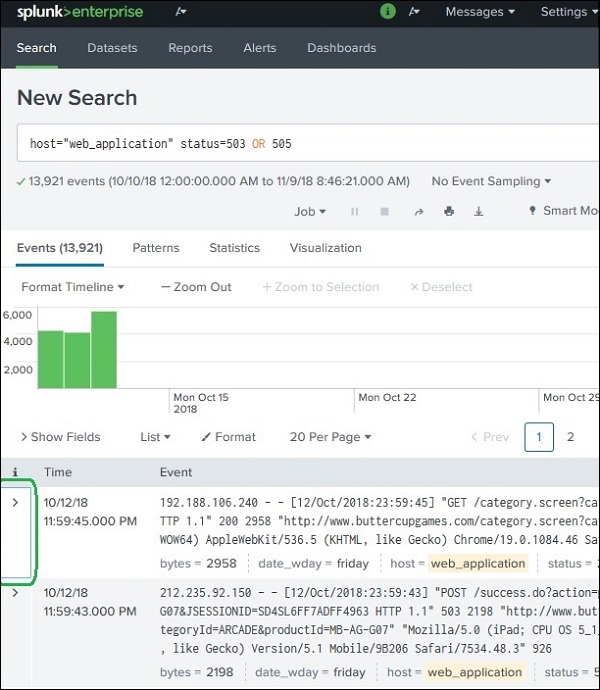

Tags are labels used to group specific field–value combinations in Splunk events.

They allow you to categorize events so that they can be searched easily with a single keyword instead of writing complex queries.

Tags are one type of Splunk knowledge object.

Tags help to:

Example:

Instead of searching multiple status codes:

You can create a tag called:

Then simply search:

Tags can be assigned to different Splunk fields such as:

| Field Type | Example |

|---|---|

| host | server01 |

| source | web_log |

| sourcetype | apache_access |

| event type | status events |

| field-value pairs | status=503 |

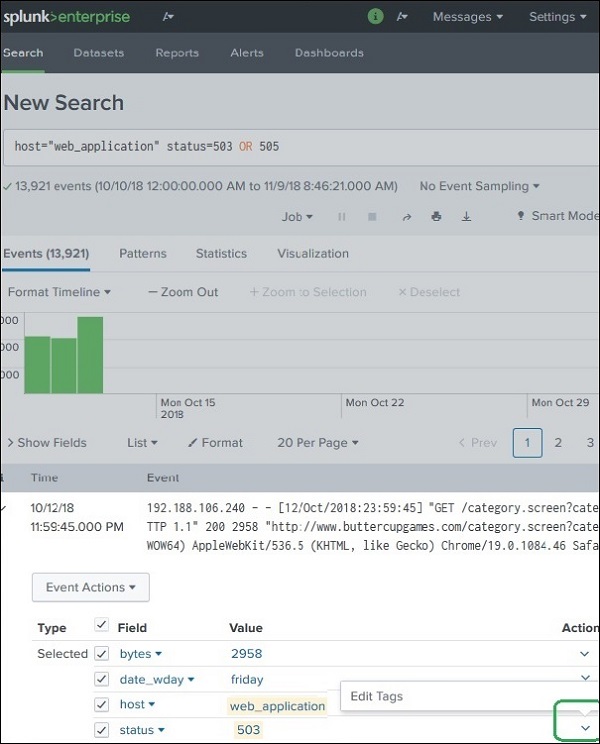

Suppose we want to group server error status codes.

Error status codes:

We assign them the tag:

So:

| Field | Value | Tag |

|---|---|---|

| status | 503 | server_error |

| status | 505 | server_error |

Example field:

Enter the tag name.

Example:

Apply this tag to:

You must repeat the step for each value.

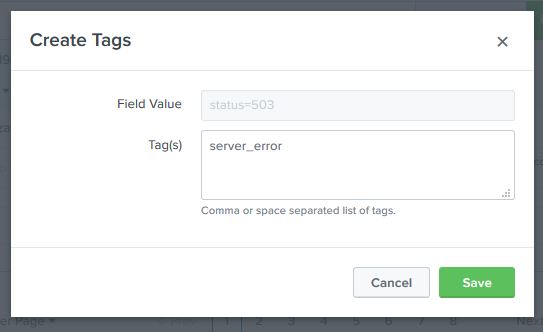

Once tags are created, searching becomes easier.

This will return events containing:

Even though the search does not explicitly mention them.

Tags make the query simpler and reusable.
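As a sketch, assuming the server_error tag has been applied to status=503 and status=505 and the events come from a host named web_application (an assumption), the tagged search replaces the longer status=503 OR status=505 form:

```spl
host="web_application" tag=server_error
| stats count by status
```

To match the tag only when it is attached to a particular field, the form tag::status=server_error can be used instead.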

| Feature | Tags | Fields |

|---|---|---|

| Purpose | Group field values | Store event data |

| Usage | Simplify searches | Extract event information |

| Example | server_error | status=503 |

✔ Simplifies complex searches

✔ Groups multiple field values

✔ Improves data categorization

✔ Helps in knowledge management

✔ Useful for dashboards and reports

A Tag in Splunk is a label assigned to field-value combinations to group similar events.

Example:

Tagged as:

Search query:

This returns all events that match those tagged values.

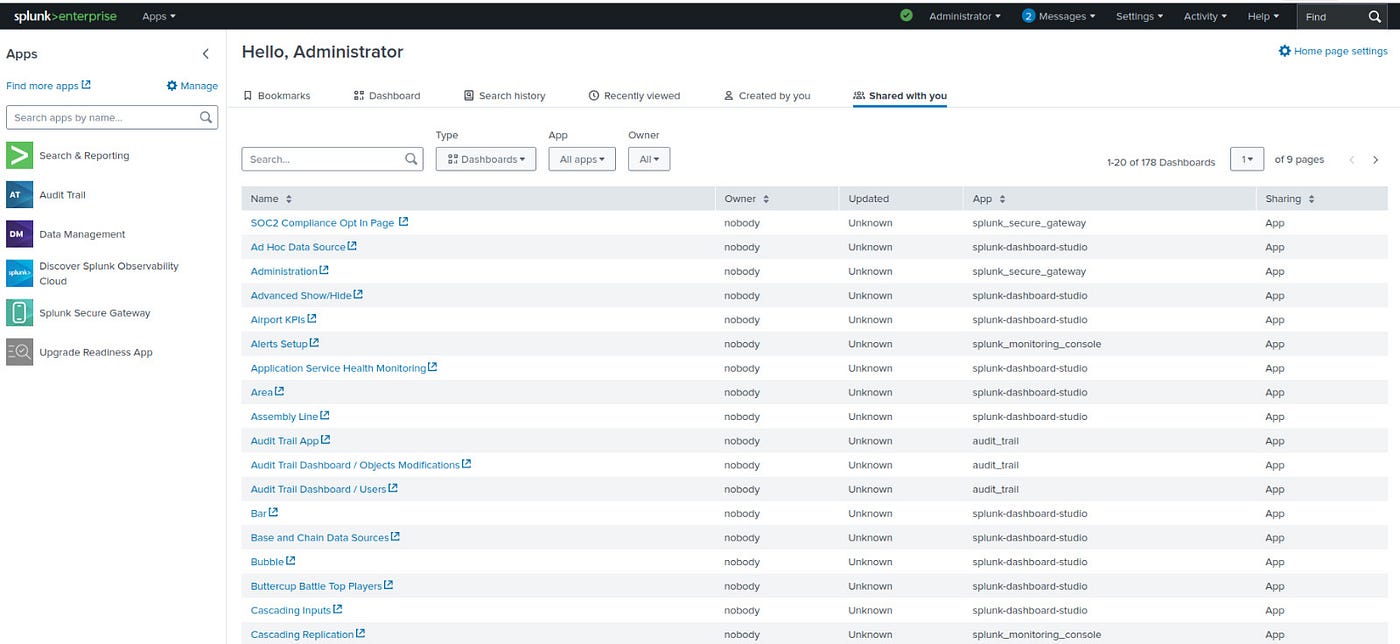

Splunk Apps are packages that extend the functionality of Splunk.

They contain configurations, dashboards, reports, searches, field extractions, and visualizations designed for specific use cases.

Apps help users analyze specific types of data quickly without building everything from scratch.

A Splunk App may contain several components:

| Component | Description |

|---|---|

| Dashboards | Visual displays of data |

| Reports | Saved searches with results |

| Alerts | Notifications triggered by conditions |

| Field Extractions | Extract fields from raw data |

| Data Models | Structured data representation |

| Lookups | External reference data |

These components work together to provide a complete monitoring or analysis solution.

Some commonly used apps include:

| App Name | Purpose |

|---|---|

| Splunk App for AWS | Monitor Amazon Web Services |

| Splunk App for Windows Infrastructure | Monitor Windows systems |

| Splunk App for Unix and Linux | Monitor Linux servers |

| Splunk Security Essentials | Security analytics |

| Splunk IT Service Intelligence (ITSI) | IT service monitoring |

These apps are usually downloaded from Splunkbase, the official marketplace.

There are two common ways to install apps.

Steps:

Steps:

Upload the .spl or .tgz package

You can manage apps using:

From here you can:

Suppose you install Splunk App for AWS.

The app automatically provides:

This saves time compared to building everything manually.

✔ Ready-to-use dashboards and reports

✔ Faster deployment for monitoring systems

✔ Easy integration with external platforms

✔ Customizable for specific business needs

✔ Extend Splunk capabilities

| Type | Description |

|---|---|

| Technology Add-ons (TA) | Data collection and field extraction |

| Visualization Apps | Custom dashboards |

| Security Apps | Security monitoring |

| Infrastructure Apps | Server and cloud monitoring |

A Splunk App is a packaged extension that adds dashboards, reports, alerts, and configurations to Splunk.

Example:

They help users analyze specific data sources quickly and efficiently.

In Splunk, removing data means deleting indexed events from the system so they are no longer searchable.

However, Splunk does not make it easy to delete individual events, because data is stored in index buckets optimized for fast searching. Instead, data removal usually happens by:

There are mainly three ways to remove data.

| Method | Description |

|---|---|

| Using the delete command | Removes specific search results |

| Deleting an index | Removes all events stored in that index |

| Data retention policy | Automatically removes old data |

delete Command

Splunk provides a delete command that marks events as deleted.

This command:

Important points:

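To use the delete command, the user must be assigned the can_delete role, which grants the delete_by_keyword capability. By default, no user has this role, not even admin.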

If you want to remove all data, you can delete the entire index.

Steps:

Example index:

Deleting the index removes all events stored in that index.

Automatic data retention is the most common method in production environments.

Splunk automatically deletes old data based on:

Example configuration:

| Setting | Example |

|---|---|

| Maximum index size | 500 GB |

| Data retention | 30 days |
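To check how these limits are currently set, one option is to query the indexes REST endpoint from the search bar. The sketch below assumes the role has permission to run the rest command; retention_days is a hypothetical field name computed for readability:

```spl
| rest /services/data/indexes
| eval retention_days = round(frozenTimePeriodInSecs / 86400, 1)
| table title maxTotalDataSizeMB currentDBSizeMB retention_days
```

With the example values above, maxTotalDataSizeMB would be 512000 (500 GB) and frozenTimePeriodInSecs would be 2592000 (30 days).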

When the limit is reached:

Suppose an index contains web logs.

Search:

If you want to remove these events:

These events will no longer appear in searches.
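A sketch of this workflow, assuming a hypothetical index named web_logs and a status=404 filter chosen purely for illustration. It is safest to run the search without the last line first and confirm that it matches only the events to be removed, because delete cannot be undone:

```spl
index=web_logs status=404
| delete
```

The command requires the can_delete role, and it only hides the events from searches; it does not free disk space.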

⚠ Splunk discourages frequent manual deletion because:

Instead, organizations usually rely on:

✔ Saves storage space

✔ Maintains system performance

✔ Ensures compliance with data policies

✔ Prevents unnecessary data accumulation

Removing data in Splunk means deleting events from indexes.

Main methods:

1️⃣ Using delete command

2️⃣ Deleting an index

3️⃣ Using automatic data retention policies

A Custom Chart in Splunk is a user-defined visualization created to display data in a specific way that is not available in the default chart options.

Splunk provides basic charts such as:

But sometimes organizations need specialized visualizations, which can be created using custom chart modules or visualization apps.

Custom charts are used when:

Examples include:

To create a custom chart in Splunk:

First run a search that produces statistical data.

Example:

This produces a table like:

| file | avg(bytes) |

|---|---|

| file1 | 2048 |

| file2 | 4096 |

After the results appear:

Splunk will display a default chart, such as a pie chart or bar chart.

Use the Format option to modify:

| Setting | Purpose |

|---|---|

| Axis labels | Label X and Y axes |

| Legend | Display chart legends |

| Data labels | Show values on chart |

| Colors | Customize appearance |

| Chart title | Add meaningful title |

Splunk also supports custom visualization apps from Splunkbase.

Examples:

| Visualization | Use Case |

|---|---|

| Sankey Diagram | Data flow analysis |

| Heat Map | Density analysis |

| Bubble Chart | Multivariable comparison |

| Gauge Chart | Performance monitoring |

These visualizations can be installed via:

Example SPL:
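One possible search, assuming a host named web_application and Splunk's default date_wday field; the column names Day and Avg Bytes are set with as and rename:

```spl
host="web_application"
| chart avg(bytes) as "Avg Bytes" over date_wday
| rename date_wday as Day
```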

Visualization result:

| Day | Avg Bytes |

|---|---|

| Monday | 3000 |

| Tuesday | 2500 |

| Wednesday | 3500 |

This can be displayed as:

✔ Better data visualization

✔ More meaningful dashboards

✔ Supports advanced analytics

✔ Improves decision making

✔ Highly customizable

A Custom Chart in Splunk is a specialized visualization created using search results and advanced chart configuration.

Steps:

1️⃣ Run search query

2️⃣ Open Visualization tab

3️⃣ Choose chart type

4️⃣ Customize using Format options

Example SPL:

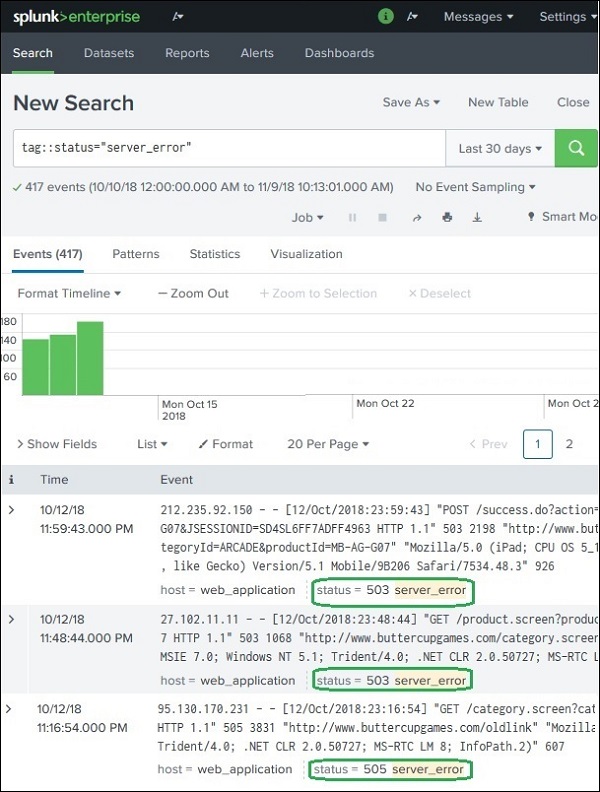

File Monitoring in Splunk means tracking files or directories for new data and automatically indexing that data.

When new data is written to a monitored file (such as log files), Splunk reads the new entries and adds them to its index so they can be searched and analyzed.

This is commonly used for application logs, server logs, and system logs.

Splunk uses a monitor input to watch files or directories.

Process:

Example:

Whenever new logs appear in this file, Splunk captures and indexes them.

Instead of monitoring a single file, you can monitor a complete directory.

Example:

If the directory contains subdirectories, Splunk can also monitor them recursively.

Example structure:

Splunk can monitor all files inside this directory tree.

Splunk can also monitor:

Condition:

✔ Splunk must have read permission for the directory.

Sometimes you only want to monitor specific files.

Splunk allows filtering using:

| Option | Purpose |

|---|---|

| Whitelist | Include only specific files |

| Blacklist | Exclude specific files |

Example:

Whitelist:

Blacklist:

This means Splunk monitors all .log files except debug.log.

If you disable or delete a monitor input:

So Splunk does not delete indexed data automatically.

Steps:

Go to:

Select:

This allows monitoring:

Example:

Splunk automatically detects:

Splunk automatically configures:

Then click Submit.

After configuration, Splunk begins indexing events.

Example search:
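A minimal sketch, assuming the monitored file is /var/log/apache2/access.log and the data was sent to the main index (both are hypothetical values; use the path and index chosen during setup):

```spl
index=main source="/var/log/apache2/access.log"
| eval Timestamp = strftime(_time, "%H:%M")
| table Timestamp _raw
| rename _raw as Event
```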

Result:

| Timestamp | Event |

|---|---|

| 10:01 | GET /index.html |

| 10:02 | POST /login |

If new log entries appear, Splunk indexes them and they show up the next time the search is run (or continuously, if the search runs in real time).

Splunk often monitors logs such as:

| Log Type | Example |

|---|---|

| Web server logs | Apache, Nginx |

| Application logs | Java, .NET |

| System logs | Linux syslog |

| Security logs | Firewall logs |

✔ Real-time log monitoring

✔ Automatic data indexing

✔ Easy integration with applications

✔ Supports large-scale log analysis

File Monitoring in Splunk allows the system to watch files or directories and index new data automatically.

Steps:

1️⃣ Add data

2️⃣ Choose Monitor

3️⃣ Select file or directory

4️⃣ Splunk starts indexing new data

Example monitored file:

Whenever new logs appear, Splunk captures and indexes them for analysis.

The sort command arranges search results in ascending or descending order based on one or more fields.

It works like ORDER BY in SQL.

Sort results in ascending order
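A minimal sketch, assuming a host named web_application with the fields file and bytes:

```spl
host="web_application"
| table file bytes
| sort bytes
```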

This sorts events by bytes from smallest to largest.

Sort results in descending order
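The same sketch in descending order, under the same assumptions about the host and fields:

```spl
host="web_application"
| table file bytes
| sort -bytes
```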

A minus sign (-) in front of the field name means descending order.

| file | bytes |

|---|---|

| file1 | 200 |

| file2 | 400 |

| file3 | 800 |

The top command finds the most frequent values in a field.

It shows:

This shows which files appear most frequently in the logs.

| file | count | percent |

|---|---|---|

| index.html | 120 | 40% |

| login.html | 80 | 27% |

| about.html | 50 | 17% |

You can also limit the results.

This shows the five most frequent values.
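A minimal sketch, assuming a host named web_application with a file field:

```spl
host="web_application"
| top limit=5 file
```

Without the limit option, top returns the ten most frequent values, each with its count and percent.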

The stats command performs statistical calculations on fields.

It is one of the most powerful commands in Splunk.

| Function | Purpose |

|---|---|

| count | Count events |

| sum | Add values |

| avg | Average |

| max | Maximum value |

| min | Minimum value |

Count events

Average file size

Average bytes by file

| file | avg(bytes) |

|---|---|

| file1 | 400 |

| file2 | 650 |

| file3 | 900 |
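The three variants above can also be combined in one search. The sketch below assumes a host named web_application and computes several statistics per file at once:

```spl
host="web_application"
| stats count avg(bytes) max(bytes) min(bytes) by file
```

Dropping the by file clause returns a single row with the totals for all events.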

| Command | Purpose | Example Use |

|---|---|---|

| sort | Order search results | Sort logs by size |

| top | Find most frequent values | Top visited pages |

| stats | Perform calculations | Average response time |

What happens here:

| Command | What it Does |

|---|---|

| sort | Arranges results in ascending/descending order |

| top | Finds most frequent values |

| stats | Performs statistical calculations |